Agile Data Warehousing Service User Guide

Document publish date: 06/04/15

Copyright © GoodData Corporation 2007 - 2015

All Rights Reserved.

PROPRIETARY AND CONFIDENTIAL INFORMATION. This document may not be disclosed to any third party, reproduced, modified or distributed without the prior written permission of GoodData Corporation.

GOODDATA CORPORATION PROVIDES THIS DOCUMENTATION AS-IS AND WITHOUT WARRANTY, AND TO THE MAXIMUM EXTENT PERMITTED, GOODDATA CORPORATION DISCLAIMS ALL IMPLIED WARRANTIES, INCLUDING WITHOUT LIMITATION THE IMPLIED WARRANTIES OF MERCHANTABILITY, NON-INFRINGEMENT AND FITNESS FOR A PARTICULAR PURPOSE.

Table of Contents

Getting Started with Data Warehouse
Data Warehouse and Vertica
Project Hierarchy
Key Terminology
Data Warehouse Quick Start Guide
Prerequisites
Creating a Data Warehouse Instance
Reviewing Your Data Warehouse Instances
Connecting to Data Warehouse from CloudConnect
Connecting to Data Warehouse from a SQL Client Tool
Deprovision Your Data Warehouse Instance
Data Warehouse Management Guide
Managing your Data Warehouse Instances
Data Warehouse Instance Details Page
Managing Users and Access Rights
Data Warehouse User Roles
Adding a User in Data Warehouse
Getting a List of Data Warehouse Users
Data Warehouse User Details
Changing a User Role in the Data Warehouse Instance
Removing a User from Data Warehouse
Resource Limitations
Data Warehouse Backups
Data Warehouse Developer Guide
Data Warehouse System Architecture Overview
Data Warehouse and the GoodData Platform Data Flow
Data Warehouse Architecture
Data Warehouse Technology
Column Storage and Compression in Data Warehouse
Data Warehouse Logical and Physical Model
Intended Usage for Data Warehouse
Working with Data Warehouse from CloudConnect
Creating a Connection between CloudConnect and Data Warehouse
Loading Data through CloudConnect to Data Warehouse
Project Parameters for Data Warehouse
Creating Tables in Data Warehouse from CloudConnect
Loading Data to Data Warehouse Staging Tables through CloudConnect
Merging Data from Data Warehouse Staging Tables to Production
Exporting Data from Data Warehouse using CloudConnect
Connecting to Data Warehouse from SQL Client Tools
Download the JDBC Driver
Data Warehouse Driver Version
Prepare the JDBC connection string
Access Data Warehouse From SQuirrel SQL
Connecting to Data Warehouse from Java
Connecting to Data Warehouse from JRuby
Install JRuby
Access Data Warehouse using the Sequel library
Installing database connectivity to Ruby
Installing the Data Warehouse JRuby support
Example Ruby code for Data Warehouse
Database Schema Design
Logical Schema Design - tables and views
Primary and Foreign Keys
Altering Logical Schema
Physical Schema Design - projections
Columns Encoding and Compression
Columns Sort Order
Segmentation
Configuring the initial superprojection with CREATE TABLE command
Creating a New Projection with CREATE PROJECTION command
Changing Physical Schema
Loading Data into Data Warehouse
Loading Compressed Data
Use RFC 4180 Compliant CSV files for upload
Error Handling
Merging Data Using Staging Tables
Statistics Collection
Querying Data Warehouse
Performance Tips
Do Not Overnormalize Your Schema
Use Run Length Encoding (RLE)
Use the EXPLAIN Keyword
Use Monitoring Tables
Write Large Data Updates Directly to Disk
Avoid Unnecessary UPDATEs
General Projection Design Tips
Minimize Network Joins
Choose Projection Sorting Criteria
Limitations and Differences in Data Warehouse from Other RDBMS
Single Schema per Data Warehouse Instance
No Vertica Admin Access
Use COPY FROM LOCAL to Load Data
Limited Parameters of the COPY Command
Limited Access to System Tables
Limited Access to System Functions
Reserved Entity Names
Database Designer Tool not available
JDBC Driver Limitations
Data Warehouse API Reference

Getting Started with Data Warehouse

GoodData Agile Data Warehousing Service (Data Warehouse) is a fully managed, columnar data warehousing service for the GoodData platform.

- Agile Data Warehousing Service may be known to some customers by its former name, Data Storage Service. See A Note about Names.

Data Warehouse is designed for storage of the full history of your business data and for easy and quick data extracts. Using standard technologies, you can quickly deliver Data Warehouse data into information marts (such as GoodData projects) or other information delivery systems.

In this cloud-based service, Data Warehouse instances can be provisioned and managed through scripts or through the GoodData gray pages.

- For more information about managing your instance, see Data Warehouse Management Guide.
- For more information about the GoodData APIs, see GoodData API Documentation.
- The gray pages are a simple web wrapper over the GoodData APIs. For more information, see https://developer.gooddata.com/article/accessing-gray-pages-for-a-project.

Using a provided JDBC driver and SQL queries, a developer may interact with a created Data Warehouse instance through CloudConnect Designer or a locally installed SQL client tool.

- A default schema is created for you when an instance is created. For more information on accessing Data Warehouse, best practices for schema design, and loading and extracting data, see Data Warehouse Developer Guide.

A Note about Names:

Some customers may be familiar with Data Warehouse under its former name, Data Storage Service. This document may contain references to "Data Storage Service" or "DSS". Most of these references occur in code snippets, which have not been updated to the new name.

Data Warehouse and Vertica

Data Warehouse runs on top of an HP Vertica backend, which lets developers use the scalability of Vertica’s distributed, massively parallel processing (MPP) columnar engine and its powerful SQL extensions.

Version in Use: HP Vertica 6.1.

Project Hierarchy

Your projects are organized into dashboards, dashboard tabs, reports, and the metrics that are contained within those reports. At the lowest level, facts, attributes, and source data represent the foundational components that are aggregated to form the metrics displayed in dashboard reports.

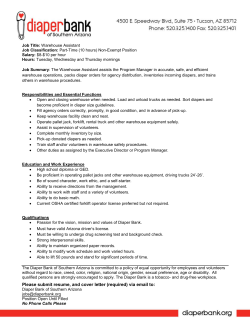

Figure: Project Hierarchy

Key Terminology

| Term | Definition | Examples |
|---|---|---|
| Data | Raw records that are loaded into project data sets for use in the project’s data model. | 1, IBM, $50000, 10/10/2012 |
| Facts | Individual numerical measurements attached to each data set in the source data. Facts are always numbers and are the smallest units of data. | Opportunity amount (e.g. $25,000); Campaign clicks (e.g. 212); Website views (e.g. 4,508) |
| Attributes | Descriptors used to break apart metrics and provide context to report data. Attributes dictate how metrics are calculated and represented. Attributes may be text (e.g. region) or numerical (e.g. size) data. | (by) month; (by) store; (by) employee; (by) region; (by) department |
| Metrics | Aggregations of facts or counts of distinct attribute values, which are represented as numbers in reports. Metrics are defined by customizable aggregation formulas. Metrics represent what is being measured in a report. | sum of sales; average salary; total costs; count of Opportunity (attribute) |
| Reports | Visualizations of data that fall into one of three categories: tables, charts, and headline reports. All reports contain at least one metric (what is being measured), and often contain one or more attributes (dictating how that metric is broken down). | A table showing employee salaries (metric) broken down by quarter (attribute); A line graph showing revenue (metric) generated across each month in the past year (attribute); A bar graph showing sales figures (metric) broken down by region (attribute) |
| Dashboard Tabs | The pages in the GoodData Portal in which reports (either tables or charts) and other dashboard elements (lines, embedded content from the web, widgets, and filters) are displayed. Dashboard tabs are typically used to organize reports within a given dashboard. | ROI; Funnel/Goals |
| Dashboards | Groups of one or more dashboard tabs that contain reports belonging to a common category of interest. | From Leads to Won Deals (marketing dashboard); Sales Management |
| Projects | A set of dashboards and the users who have permission to interact with them. A project also includes the underlying dashboard, tabs, reports, metrics, and data models. Projects are often provisioned for use by an entire team or department. In these cases, a change made by one user is visible to all. | Leads to Cash; Subscription Management |
| Data Set | A collection of related facts and attributes typically provided from a single data source. | An Opportunity data set, containing facts related to attributes like Name, Opportunity Amount, and Stage. |
| Logical Data Model (LDM) | A model of the definition of all facts, attributes, and datasets in a project, as well as the relationships between them. | To see an example, click Model in the Manage page of the GoodData Portal. |

Data Warehouse Quick Start Guide

Prerequisites

To get started using Agile Data Warehousing Service, verify that you have the following in place:

1. A GoodData platform account. If you already access the GoodData Portal, use the same credentials for your Data Warehouse implementation.
2. Data Warehouse-enabled authorization token. Your existing project authorization token must be enhanced by GoodData Customer Support to enable the creation and management of your Data Warehouse instances.
3. Application access. This Quick Start Guide covers Java-based graphical database client tools such as SQuirrel SQL and GoodData’s CloudConnect Designer application. You can also connect to Data Warehouse programmatically from Java, JRuby, or other Java-based languages and platforms.
4. (Optional) GoodData project as a target. If you plan to load the data from Data Warehouse into the GoodData platform, you must have the Administrator role for at least one GoodData project. For more information on GoodData projects, see Project Hierarchy.

Creating a Data Warehouse Instance

Use the steps in this section to initialize a new Data Warehouse instance.

In most environments, only one Data Warehouse instance is needed. A Data Warehouse instance can receive data from multiple sources and can be used to populate one or more GoodData projects (datamarts), which deliver the information to business users.

- You may find it useful to maintain separate instances for development, testing, and production uses.

To initialize a new Data Warehouse instance, you must provide the following information:

- Name of the Data Warehouse instance
- Description (optional)
- Authorization token

NOTE: Your project authorization token must be enabled to create Data Warehouse instances. Please file a request with GoodData Customer Support.

Steps:

To create your Data Warehouse instance from the gray pages, complete the following steps:

1. Log in to the GoodData Portal:
   https://secure.gooddata.com
2. If you are already logged in, reload the page to refresh your session.
3. Navigate to the following URL:
   https://secure.gooddata.com/gdc/datawarehouse/instances
4. If you have not logged into the GoodData Portal previously, you must enter your credentials first. Navigate to the above URL after logging in.
5. The gray page for creating a Data Warehouse instance is displayed. Any previously created Data Warehouse instances are displayed above the form.

Figure: Create a Data Warehouse instance

6. Enter the name, description, and project authorization token for your instance into the form. Click Create.
7. In rare cases, you may receive the following error message. If so, refresh the page opened to the GoodData Portal:

   This server could not verify that you are authorized to access the document requested. Either you supplied the wrong credentials (e.g., bad password), or your browser doesn't understand how to supply the credentials required. Please see Authenticating to the GoodData API for details.

8. The task is queued for execution in the platform. You may use the link in the gray page to query the status of this task. Reload the page until you see the following:

Figure: Completed task of Data Warehouse instance creation

9. Click the link to access your Data Warehouse instance. See Data Warehouse Instance Details Page.

A default schema is created for you in the new instance.
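When the creation task is driven from a script rather than by reloading the gray page, the "reload until done" step becomes a polling loop. The following is a minimal sketch only: `fetch_status` is a hypothetical caller-supplied function that queries the task-status link, and the status strings "RUNNING" and "OK" are assumptions for illustration, not values taken from the GoodData API.

```python
import time
from typing import Callable


def wait_for_task(fetch_status: Callable[[], str],
                  interval_s: float = 5.0,
                  max_attempts: int = 60,
                  sleep: Callable[[float], None] = time.sleep) -> str:
    """Poll fetch_status() until it stops returning "RUNNING".

    fetch_status is supplied by the caller (hypothetical here); it should ask
    the task-status link shown in the gray page for the current state.
    """
    for _ in range(max_attempts):
        status = fetch_status()
        if status != "RUNNING":  # assumed marker for "still queued or running"
            return status
        sleep(interval_s)
    raise TimeoutError(f"task still running after {max_attempts} checks")


# Demo with a fake status source: two "RUNNING" answers, then "OK".
_answers = iter(["RUNNING", "RUNNING", "OK"])
result = wait_for_task(lambda: next(_answers), sleep=lambda s: None)
print(result)  # prints "OK"
```

Passing the `sleep` function as a parameter keeps the loop testable without real delays; a production script would leave the default in place.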

Reviewing Your Data Warehouse Instances

After you have created Data Warehouse instances, you may access them through the gray pages:

https://secure.gooddata.com/gdc/datawarehouse/instances

Figure: List of Data Warehouse Instances

Each Data Warehouse record includes basic information, including links to the Data Warehouse Detail page for the instance and to a page for managing users who can access the Data Warehouse.

- For more information on the Data Warehouse Detail page, see Data Warehouse Instance Details Page.
- For more information on managing users, see Managing Users and Access Rights.

Data Warehouse Status:

The status field identifies the current status of the instance:

- ENABLED - available and ready for read-write operations.
- DELETED - a deleted instance.
- ERROR - an instance that failed to be created.
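A script that works with instances may want to check the status field before attempting any reads or writes. The sketch below models the three documented status values; the names `InstanceStatus` and `is_usable` are hypothetical helpers introduced for illustration.

```python
from enum import Enum


class InstanceStatus(Enum):
    ENABLED = "ENABLED"  # available and ready for read-write operations
    DELETED = "DELETED"  # a deleted instance
    ERROR = "ERROR"      # an instance that failed to be created


def is_usable(status_text: str) -> bool:
    """Return True only for an instance that can accept reads and writes.

    Raises ValueError for a status string that is not one of the three
    documented values.
    """
    return InstanceStatus(status_text) is InstanceStatus.ENABLED


print(is_usable("ENABLED"))  # prints True
print(is_usable("DELETED"))  # prints False
```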

Connecting to Data Warehouse from CloudConnect

Using the JDBC driver, you can create integrations between CloudConnect Designer and your Data Warehouse instance. Then, using CloudConnect components, you can create ETL graphs to load data into your Data Warehouse instance, transform it within the database using SQL, and extract it from Data Warehouse for use in your GoodData projects.

- CloudConnect Designer is a downloadable application for building ETL graphs and logical data models for your GoodData projects. For more information on CloudConnect Designer, see Developer Tools.
- To get started building your first GoodData project, see Developer Tools.
- If you have already installed CloudConnect Designer, please upgrade it to the most recent version. The driver is provided as part of the upgrade package.

Steps:

1. Create a CloudConnect connection for your Data Warehouse instance. See Creating a Connection between CloudConnect and Data Warehouse.
2. Your connection must include the appropriate JDBC connection string. See Prepare the JDBC connection string.
3. After the connection is established, you can begin loading data into Data Warehouse from CloudConnect.
4. For your project, you should create project parameters for username, password, and JDBC string. See Project Parameters for Data Warehouse.
5. You can create tables by running a CREATE TABLE statement in the DBExecute component. See Creating Tables in Data Warehouse from CloudConnect.

   NOTE: Data Warehouse does not support upsert operations and does not enforce unique row identifiers at load time.

6. Data is loaded through CloudConnect by using the COPY LOCAL command in the DBExecute component. See Loading Data to Data Warehouse Staging Tables through CloudConnect.
7. When data has been loaded into the staging tables, you can perform any necessary in-database operations. Data can then be merged into your production tables. See Merging Data from Data Warehouse Staging Tables to Production.
8. When data is ready to be moved from Data Warehouse to the datamart, you can extract it using the DBInputTable component and pass the metadata into a GD Dataset Writer component for storage in your GoodData project. See Exporting Data from Data Warehouse using CloudConnect.
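Because Data Warehouse does not support upserts or enforce unique row identifiers at load time, the staging-then-merge pattern in the steps above is what keeps production tables to one row per key. The sketch below illustrates the intended outcome of such a merge in memory only; it is not the SQL that runs in the database, and the function name, the `id` key column, and the sample rows are all made up for illustration.

```python
def merge_staging(production: list[dict], staging: list[dict], key: str) -> list[dict]:
    """Return production rows with staging rows merged in, one row per key value.

    A staging row replaces the production row that has the same key;
    staging rows with new keys are appended.
    """
    merged = {row[key]: row for row in production}
    for row in staging:
        merged[row[key]] = row
    return list(merged.values())


production_rows = [{"id": 1, "amount": 100}, {"id": 2, "amount": 200}]
staging_rows = [{"id": 2, "amount": 250}, {"id": 3, "amount": 300}]

merged_rows = merge_staging(production_rows, staging_rows, key="id")
for row in merged_rows:
    print(row)
```

In this example the row with id 2 is replaced by its staging version and the row with id 3 is added, which is the behavior a merge from staging to production is meant to achieve.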

Connecting to Data Warehouse from a SQL Client Tool

Data Warehouse supports connections from Java-based SQL client tools such as SQuirrel SQL using the GoodData JDBC driver. To connect, complete the following steps.

Steps:

1. Download and install the JDBC driver. See Download the JDBC Driver.

2. Prepare the connection string. See Prepare the JDBC connection string.

3. Create the connection from your favorite Java-based SQL tool. Example documentation is provided for the following:

   - See Access Data Warehouse From SQuirrel SQL.

Deprovision Your Data Warehouse Instance

If needed, you can deprovision a Data Warehouse instance.

- When an instance is deleted, it is still listed as one of your Data Warehouse instances. However, its status is marked as DELETED.
- Deleted instances are still visible through the gray pages. However, data cannot be added to or removed from the instance using the JDBC driver.

Deprovisioning an instance removes it from access through the JDBC driver. Before you deprovision an instance, you should review all of the users and projects that are connected to the instance. All users should be informed of the change in advance of removing the instance, so that they can verify that none of their projects is affected. Also, you should make arrangements so that any project using the Data Warehouse instance has access to data through another resource, such as a replacement Data Warehouse instance.

Deleting an instance cannot be undone.

Steps:

1. Visit your list of instances:

https://secure.gooddata.com/gdc/datawarehouse/instances

2. Click the instance you wish to remove.

3. Then, click Delete.

4. The status of the instance changes to DELETED.

Data Warehouse Management Guide

This section provides guidance on how to manage your Data Warehouse instances and the users in those instances through the GoodData gray pages.

- The gray pages reflect the structure of the underlying GoodData APIs. The commands you execute through the gray pages can be managed programmatically through the APIs.

Data Warehouse users are specific to the instances in which you create them. They are not equivalent to platform users.

Managing your Data Warehouse Instances

Through the gray pages, you can review your Data Warehouse instances and manage aspects of them.

Topics:

- Creating a Data Warehouse Instance
- Reviewing Your Data Warehouse Instances
- Deprovision Your Data Warehouse Instance
- Data Warehouse Instance Details Page

Data Warehouse Instance Details Page

You may access the details of individual Data Warehouse instances through the self links in the List of Data Warehouse Instances or through a direct URI:

Figure: Your created Data Warehouse instance

Key URLs:

You may wish to retain the following URLs for later use in the gray pages:

- self - The URL to the Data Warehouse instance, which is used to construct the JDBC connection string required to connect to your Data Warehouse instance using CloudConnect or another Java-based tool.
- users - URL to the list of users in the Data Warehouse instance.
- jdbc - URL to the JDBC access point for the Data Warehouse instance, which is used internally by the JDBC driver to establish a database connection. See Connecting to Data Warehouse from a SQL Client Tool.

Managing Users and Access Rights

This section provides details on how to manage Data Warehouse users and their permissions within your instance.

NOTE: You must have a GoodData platform account before you may be added as a new user to a Data Warehouse instance.

Topics:

- Data Warehouse User Roles
- Adding a User in Data Warehouse
- Getting a List of Data Warehouse Users
- Data Warehouse User Details
- Changing a User Role in the Data Warehouse Instance
- Removing a User from Data Warehouse

Data Warehouse User Roles

The following roles may be assigned to Data Warehouse users. General permissions are listed for each role:

Data Admin role

Role identifier: dataAdmin

This role should be assigned to any Data Warehouse user who needs to use the instance for loading and processing data.

- Read all tables or views.
- Import data into Data Warehouse tables.
- Create, drop or purge Data Warehouse tables.
- Create other objects such as functions and views in the database.

NOTE: The Data Admin role is sufficient for basic use of the Data Warehouse instance.

Admin role

Role identifier: admin

This role should be reserved for the user or users who need to have control over the other users in the Data Warehouse instance.

- All permissions of the Data Admin role, plus the following:
- Add user.
- Remove user. User cannot be the Owner of the instance.
- Change a user’s role. User cannot be the Owner of the instance and cannot be changed to the Owner role.
- Edit the name or description of a Data Warehouse instance.

Data Warehouse instance owner

The user who created the Data Warehouse instance is automatically assigned ownership of the instance. Ownership is not a formal role in the instance.

NOTE: The Owner of a Data Warehouse instance cannot be changed.

The Owner is also automatically assigned the Admin role. The Owner has all of the permissions of the Admin role, as well as the permission to delete the Data Warehouse instance.
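The relationship between the two roles and instance ownership can be summarized as nested permission sets. The sketch below is an illustration of the rules described above, not a GoodData API: the role identifiers `dataAdmin` and `admin` come from this guide, while the action names and the `is_allowed` helper are hypothetical labels.

```python
# Action names are made-up labels for the permissions listed in this section.
DATA_ADMIN_PERMISSIONS = frozenset({
    "read_tables_and_views",
    "import_data",
    "create_drop_purge_tables",
    "create_other_objects",
})

# Admin has every Data Admin permission plus user and instance management.
ADMIN_PERMISSIONS = DATA_ADMIN_PERMISSIONS | frozenset({
    "add_user",
    "remove_user",
    "change_user_role",
    "edit_instance_name_or_description",
})

# Only the Owner may delete the instance.
OWNER_ONLY_PERMISSIONS = frozenset({"delete_instance"})

ROLE_PERMISSIONS = {
    "dataAdmin": DATA_ADMIN_PERMISSIONS,
    "admin": ADMIN_PERMISSIONS,
}


def is_allowed(role: str, action: str, is_owner: bool = False) -> bool:
    """Return True if a user with this role (and ownership flag) may perform action."""
    if is_owner:
        # The Owner is automatically an Admin and may also delete the instance.
        return action in (ADMIN_PERMISSIONS | OWNER_ONLY_PERMISSIONS)
    return action in ROLE_PERMISSIONS[role]


print(is_allowed("dataAdmin", "import_data"))                   # prints True
print(is_allowed("dataAdmin", "add_user"))                      # prints False
print(is_allowed("admin", "delete_instance"))                   # prints False
print(is_allowed("admin", "delete_instance", is_owner=True))    # prints True
```

The sketch does not model the additional restrictions on the target user, such as the rule that the Owner cannot be removed or have their role changed.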

Adding a User in Data Warehouse

Use the following steps to add a user to the Data Warehouse instance via the gray pages.

NOTE: The account creating the new user must be an Admin for the Data Warehouse instance.

NOTE: Only users with existing GoodData platform accounts may be added to the Data Warehouse instance.

NOTE: Users are added silently. No email is delivered to the user.

Steps:

1. Get a list of users to add. For each user, you must acquire either the user profile URI or the GoodData platform account identifier.

   NOTE: A user’s profile URI is available when the user logs in through the gray pages. You may also query for the list of users in a project via API; the returned JSON includes user profile identifiers for each user in the project.

2. Determine the role to apply to the user. See Data Warehouse User Roles.
3. Acquire the instance identifier for the Data Warehouse instance to which you wish to add the user. See Prepare the JDBC connection string.
4. Visit the following gray page:
   https://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]/users
5. The list of users and their roles is displayed. At the bottom of the page, complete the form:

Figure: Add new Data Warehouse user form

6. From the drop-down, select the Data Warehouse user role to assign to the user. See Data Warehouse User Roles.
7. Enter the Profile URI for the user or the user’s GoodData platform identifier. Do not enter both.

   NOTE: When adding users by GoodData platform login identifier, you should retrieve and store the profile URI, as other API endpoints may not permit use of the login identifier for entry.

8. To add the user, click Add user to the storage.
9. In rare cases, you may receive the following error message. If so, refresh the page opened to the GoodData Portal:

   This server could not verify that you are authorized to access the document requested. Either you supplied the wrong credentials (e.g., bad password), or your browser doesn't understand how to supply the credentials required. Please see Authenticating to the GoodData API for details.

10. The task is queued for execution in the platform. You may use the link in the gray page to query the status of this task. Reload the page until you see the following:

Figure: User added to Data Warehouse instance

11. The user has been added to the instance.
12. Repeat these steps to add additional users to the Data Warehouse instance.
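The form rules in the steps above (a role from the documented list, and exactly one of profile URI or login identifier) can be checked before a request is submitted. The following sketch validates those inputs and builds a JSON body. The JSON field names (`user`, `role`, `profile`, `login`) are assumptions made for illustration and are not confirmed by this section; check the Data Warehouse API Reference before relying on them.

```python
import json
from typing import Optional

VALID_ROLES = {"dataAdmin", "admin"}  # role identifiers from Data Warehouse User Roles


def build_add_user_payload(role: str,
                           profile: Optional[str] = None,
                           login: Optional[str] = None) -> str:
    """Validate the add-user inputs and return a JSON string.

    Exactly one of profile (profile URI) or login (platform identifier)
    must be given, mirroring the "do not enter both" rule of the form.
    """
    if role not in VALID_ROLES:
        raise ValueError(f"unknown role: {role!r}")
    if (profile is None) == (login is None):
        raise ValueError("provide exactly one of profile or login")
    user = {"role": role}  # field names are assumed, not confirmed
    if profile is not None:
        user["profile"] = profile
    else:
        user["login"] = login
    return json.dumps({"user": user})


print(build_add_user_payload(
    "dataAdmin", profile="/gdc/account/profile/2374a6d5d45cca7a405d6c690"))
```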

Getting a List of Data Warehouse Users

To retrieve the list of users in your Data Warehouse instance, visit the following URL:

https://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]/users

NOTE: Admin users can see all users in the Data Warehouse instance. More restricted users can retrieve only information on their own accounts.
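If you manage several instances, it can help to build the users URL from the instance identifier instead of editing it by hand. A minimal sketch follows; the helper name `users_url` and the identifier `abc123` are made up for illustration.

```python
INSTANCES_URL = "https://secure.gooddata.com/gdc/datawarehouse/instances"


def users_url(dw_id: str) -> str:
    """Return the gray-page URL that lists the users of one instance."""
    if not dw_id or "/" in dw_id:
        raise ValueError(f"not a valid instance identifier: {dw_id!r}")
    return f"{INSTANCES_URL}/{dw_id}/users"


print(users_url("abc123"))  # "abc123" is a made-up identifier
```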

Figure: List of Users in a Data Warehouse Instance

You may use the form at the bottom of the screen to add users to the Data Warehouse instance. See Adding a User in Data Warehouse.

Profile identifiers:

profile - This URI provides access to the profile of a user.

The profile identifier is the long string at the end of the profile URI. For example, in the following profile URI:

/gdc/account/profile/2374a6d5d45cca7a405d6c690

the profile identifier is:

2374a6d5d45cca7a405d6c690
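Extracting the identifier from a profile URI is a simple string operation. The sketch below assumes the URI always has the `/gdc/account/profile/` prefix shown in the example above; the function name is hypothetical.

```python
PROFILE_PREFIX = "/gdc/account/profile/"


def profile_id_from_uri(profile_uri: str) -> str:
    """Return the profile identifier at the end of a profile URI."""
    if not profile_uri.startswith(PROFILE_PREFIX):
        raise ValueError(f"not a profile URI: {profile_uri!r}")
    profile_id = profile_uri[len(PROFILE_PREFIX):].strip("/")
    if not profile_id or "/" in profile_id:
        raise ValueError(f"no profile identifier in: {profile_uri!r}")
    return profile_id


print(profile_id_from_uri("/gdc/account/profile/2374a6d5d45cca7a405d6c690"))
# prints 2374a6d5d45cca7a405d6c690
```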

To review details of the user or to make changes to the user account, click the self link.

- See Data Warehouse User Details.

Data Warehouse User Details

In the Data Warehouse User Details gray page, you can review the profile of the selected user of your Data Warehouse instance.

- In the list of Data Warehouse users in your instance, click the self link for the user.

Figure: Data Warehouse User Details Page

- To verify the username of the Data Warehouse user, click the profile link.
- See Changing a User Role in the Data Warehouse Instance.
- See Removing a User from Data Warehouse.

Changing a User Role in the Data Warehouse Instance

Use the following steps to change the role assigned to a user in your Data Warehouse instance.

NOTE: The user applying the change must be an Admin in the instance.

NOTE: The owner of the Data Warehouse instance cannot be demoted from an Admin role or removed from the instance.

Steps:

1. In the list of Data Warehouse users in your instance, click the self link for the user. The user details are displayed. See Data Warehouse User Details.
2. To verify the user’s identity, click the profile link.
3. From the role drop-down, select the new user role. See Data Warehouse User Roles.
4. Click Update role.
5. The user’s role is updated. Verify the value for role.

Removing a User from Data Warehouse

Complete the following steps to remove a selected user from your Data Warehouse instance.

NOTE: The user applying the change must be an Admin in the instance.

NOTE: The owner of the instance cannot be demoted from an Admin role or removed from the instance.

Steps:

1. In the list of Data Warehouse users in your instance, click the self link for the user. The user details are displayed. See Data Warehouse User Details.
2. To verify the user’s identity, click the profile link.

3. Click Delete.

4. The user is removed from the instance.

Resource Limitations

Memory limitations:

By default, individual customer queries are not allowed to allocate more than 10 GB of RAM. This limitation may vary depending on your license. For more information, please contact GoodData Account Management.

Time limitations:

Queries that run for longer than 2 hours are terminated.

Parallel query limitations:

You may execute up to 4 queries in parallel per customer token.

- If more than 4 queries are executed in parallel, additional queries are queued and run later, when resources become available. Resources are checked once per minute.
- Each query is allocated memory from your shared memory pool, so you may run into the memory limitations even if you are within your query count limitation.
- If queries are queued for more than two hours, they are terminated.

Tip: Where possible, GoodData recommends serializing your queries and staggering jobs to prevent overlap.

Data Warehouse Backups

GoodData performs standard backups of the Vertica clusters hosting Data Warehouse on a daily basis. In the unlikely event of service disruption or other network-wide failure, data is restored to the previous backup.

NOTE: GoodData does not provide on-demand data recovery. Users may manage their own backups through third-party tools.

This backup policy matches the backup policy provided by the GoodData platform.

Data Warehouse Developer Guide

Data Warehouse System Architecture Overview

This section outlines Agile Data Warehousing Service integration with the

GoodData BI platform architecture and data flows, as well as the architecture of

Data Warehouse itself.

Data Warehouse and the GoodData Platform Data Flow

Figure: Data Warehouse and Platform Data Flows

Typically, the data flow is the following:

1. Source data may be uploaded by the customer to GoodData’s incoming

data storage via WebDAV, where it is collected by the BI automation layer,

which is typically custom CloudConnect ETL or Ruby scripts. Source data

may also be retrieved directly by the automation layer from the source

system’s API.

2. For more information on project-specific storage, see

https://developer.gooddata.com/article/project-specific-storage.

3. ETL graphs may be created and published from CloudConnect Designer.

For more information on CloudConnect Designer, see Developer Tools.

4. Ruby scripts may be built and deployed using the GoodData Ruby SDK.

See http://sdk.gooddata.com/gooddata-ruby/.

NOTE: Developers may access Data Warehouse remotely

with SQL via the JDBC driver provided by GoodData.

Other JDBC drivers cannot be used with Data Warehouse.

For more information, see Download the JDBC Driver.

5. The automation layer imports the data into Data Warehouse and does any

necessary in-database processing using SQL. See Querying Data

Warehouse.

6. At this point, the data is ready for import into a presentation layer. Data is

extracted from Data Warehouse using SQL and is typically imported into a

GoodData project.

7. During extraction, any additional transformations may be performed in the

database using SQL or using CloudConnect Designer before the data is

uploaded to the presentation layer.

8. The data is available through the presentation layer for users.

For a simple example of a data flow implementation in CloudConnect, see

Working with Data Warehouse from CloudConnect.

Data Warehouse Architecture

Internally, Agile Data Warehousing Service consists of a number of shared or

dedicated clusters.

Figure: Agile Data Warehousing Service architecture

Each Data Warehouse instance is spread across multiple nodes within either

shared clusters or a dedicated cluster.

NOTE: Data Warehouse instances are isolated; it is not

possible to run a query that references data stored in two

different Data Warehouse instances, even if both instances are

accessible to the same user.

Licensees of the GoodData platform receive an authorization token for creating projects in the platform. After it has been enabled for Data Warehouse, this token may also be used to create a Data Warehouse instance, and it ensures that your Data Warehouse instance is created on an appropriate cluster.

NOTE: Your project authorization token must be enabled to

create Data Warehouse instances. Please file a request with

GoodData Customer Support.

• See Management Guide.

Data Warehouse Technology

Agile Data Warehousing Service is based on the HP Vertica database. Each node in a Data Warehouse cluster runs an instance of the Vertica database. Data Warehouse supports the SQL:99 standard with several Vertica-specific extensions.

• For more information on the limitations against the SQL:99 standard or the Vertica documentation, see Limitations.

• For additional details, see Vertica documentation.

NOTE: You need GoodData’s Data Warehouse JDBC Driver to

connect to Data Warehouse. The Vertica JDBC driver cannot be

used to connect to Data Warehouse. See Download the JDBC

Driver.

Column Storage and Compression in Data Warehouse

Unlike standard row-based relational databases, the Data Warehouse stores data

using a columnar storage mechanism:

Figure: Row vs. Column storage

Columnar storage is particularly well suited to improving disk performance when running complex analytical queries or in-database business transformations. Queries can be answered by accessing only the columns required by the query, which fits well with data warehousing and other read-intensive use cases.

Moreover, the data in columns is compressed using various encoding and compression mechanisms, which further improves disk I/O. For example, run-length encoding can be applied to the “symbol” column:

Figure: Columnar storage enhances disk storage and access
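To make the idea of run-length encoding concrete, the sketch below encodes a column of repeated values as value and count pairs. It illustrates the general technique only and is not how the database engine is implemented; the sample symbols are hypothetical.

```ruby
# Collapse each run of consecutive equal values into [value, run_length].
def run_length_encode(values)
  values.slice_when { |a, b| a != b }.map { |run| [run.first, run.size] }
end

# Expand [value, run_length] pairs back into the original column.
def run_length_decode(pairs)
  pairs.flat_map { |value, count| [value] * count }
end

symbols = %w[IBM IBM IBM HPQ HPQ IBM]
encoded = run_length_encode(symbols)
p encoded
p run_length_decode(encoded) == symbols
```

The encoding pays off most when the column is sorted, because sorting produces long runs of identical values.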

Data Warehouse Logical and Physical Model

Schema design elements such as tables and views are considered a database’s

logical database model. These objects provide information about available data

elements.

However, they do not define how the data is actually stored on disk or how it is distributed across the nodes within a Data Warehouse cluster. Those structures are part of the physical data model.

The structures that define how table columns are organized on the disk and how

the data are distributed in the cluster are called projections:

Figure: Logical model vs. physical model

The following parameters of a physical data representation can be configured

using a projection:

• Columns to be included and column encoding (run-length encoding, delta encoding, etc.)

• Sequence of the table columns

• Segmentation: the rows to keep on the same node and the projections to be replicated instead of split

A single table may have multiple projections to support different query types. A

projection does not have to necessarily include all table columns. For example, in

a large transactional table, you may wish to have a projection sorted by

timestamp to support queries over the most recent data. You may also need a

projection sorted by customer to support a customer segmentation query, which

could be expensive. The table would have two additional projections to support

these use cases.

• A projection is similar to a materialized view in a traditional database. Like a materialized view, a projection stores result sets on disk instead of recomputing them with each query. As data is added or updated, these results are automatically refreshed.

• For more information on projections, see Physical Schema.

Data Warehouse users create SQL queries against the logical model. The

underlying engine automatically selects the appropriate projections.

• As a feature of Vertica, Data Warehouse databases lack indexes. In place of indexes, you use projections to optimize queries.

Each logical table requires a physical model. This model can be described by a

projection for the table that includes all table columns. A projection with all table

columns is called a superprojection.

• The CREATE TABLE command automatically creates a superprojection for the new table.

• Proprietary SQL extensions are available for configuring the parameters of the default superprojection.

When building your Data Warehouse database, you can start with one

superprojection for each table. You may consider adding additional projections

from HP Vertica to improve performance of slow queries.

• Additional projections can be defined using the CREATE PROJECTION command.

• In addition to specifying the columns in the projection, developers may specify the compression, sort order, and the distribution of data across the nodes of the cluster (segmentation) for the projection.
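As a rough illustration of the options listed above, the following Ruby sketch assembles a CREATE PROJECTION statement. The clause layout follows the general Vertica form (column list with optional ENCODING, ORDER BY, and SEGMENTED BY HASH ... ALL NODES); the helper name, the transactions table, and its columns are hypothetical, and the clauses accepted by your Data Warehouse instance should be confirmed in the Physical Schema documentation.

```ruby
# Builds a CREATE PROJECTION statement as text.
# columns maps each column name to an encoding (or nil for the default).
def create_projection_sql(name:, table:, columns:, order_by:, segment_by: nil)
  column_defs = columns.map do |column, encoding|
    encoding ? "#{column} ENCODING #{encoding}" : column.to_s
  end
  distribution =
    if segment_by
      "SEGMENTED BY HASH(#{segment_by}) ALL NODES"
    else
      'UNSEGMENTED ALL NODES'
    end

  <<~SQL
    CREATE PROJECTION #{name} (
      #{column_defs.join(",\n  ")}
    )
    AS SELECT #{columns.keys.join(', ')}
    FROM #{table}
    ORDER BY #{Array(order_by).join(', ')}
    #{distribution};
  SQL
end

# A projection sorted by customer, as in the segmentation example above.
puts create_projection_sql(
  name: 'transactions_by_customer',
  table: 'transactions',
  columns: { customer_id: 'RLE', created: nil, amount: nil },
  order_by: [:customer_id, :created],
  segment_by: :customer_id
)
```

The generated text would be executed like any other SQL statement, for example from a DBExecute component.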

For more information about designing and optimizing the data model:

• See Database Schema Design.

• See Performance Tips.

• For additional details, see the Physical Schema section of the Vertica documentation.

Intended Usage for Data Warehouse

Agile Data Warehousing Service is a relational database designed for data

warehousing use cases. Although Data Warehouse may be accessed from any

JDBC-capable client application and supports a superset of the SQL:99

specification, Data Warehouse is expected to be used as a data warehouse and

persistent staging environment.

NOTE: It is not recommended to use Data Warehouse as an

OLTP database with a large number of parallel queries and data

updates with a very short expected response time.

Working with Data Warehouse from CloudConnect

The CloudConnect Designer installation package includes the GoodData JDBC

driver, which is needed to connect to Agile Data Warehousing Service.

• CloudConnect Designer is a downloadable application for building ETL graphs and logical data models for your GoodData projects. For more information on CloudConnect Designer, see Developer Tools.

• To get started building your first GoodData project, see Developer's Getting Started Tutorial.

• If you have already installed CloudConnect Designer, please upgrade to the most recent version. The driver is provided as part of the upgrade package.

Creating a Connection between CloudConnect and Data

Warehouse

Please complete the following to create a connection between CloudConnect and

Data Warehouse.

Steps:

1. Open your project’s ETL graph or create a new one.

2. To create a new database connection in CloudConnect using the built-in

Data Warehouse driver, secondary-click Connections in the Project

Outline. Then select Connections > Create DB Connection....

NOTE: Do not create your connection from the File menu.

3. Select a <custom> database connection.

4. You may wish to use project parameters for the username and password

and to pass them into the connection at runtime. See Loading Data through

CloudConnect to Data Warehouse.

5. The connection requires a connection string. Remember to insert the

identifier for the Data Warehouse instance (DW_ID) as part of the

connection string. See Prepare the JDBC connection string.

6. Your CloudConnect connection should look similar to the following:

Figure: Data Warehouse connection from CloudConnect

7. Click Validate connection to test it.

8. If the connection succeeds, save it.

You have configured your graph in CloudConnect to connect to the specified

Data Warehouse instance. This connection must be referenced in each

component instance that interacts with Data Warehouse.

Reusing the connection:

The connection to Data Warehouse is local to the graph in which you created it. It

must be copied into other graphs to be used in other projects, if project

parameters have been created for it.

• By default, each database component instance creates a new connection.

NOTE: To avoid repeating yourself, the connection settings

should reference CloudConnect variables rather than using

hard-coded constants. See Project Parameters for Data

Warehouse.

NOTE: To create a connection that can be reused by multiple components, you may deselect the Thread-safe button in the Advanced tab of the Connection Settings dialog. However, this configuration should be avoided unless truly necessary for operations such as retrieving an auto-incremented key. Do not turn off the thread safety for connections used by components expected to issue long-running queries. Transactions running for more than 2 hours will be terminated.

Loading Data through CloudConnect to Data

Warehouse

Using the DBExecute component, you specify the CloudConnect connection to

use and the COPY LOCAL commands to execute against your Data Warehouse

instance.

• If you have not done so already, create a connection in CloudConnect Designer so that the application can interact with Data Warehouse. See Connecting to Data Warehouse from CloudConnect.

NOTE: You must use the COPY LOCAL command to load data

into Data Warehouse. For more information on the command,

supported parameters, and its Data Warehouse-specific

implementation, see Loading Data into Data Warehouse.

Data Warehouse does not support upsert operations and does not require unique

record identifiers at load time. For this reason, you should utilize staging tables to

load your data and then to perform a merge. See Merge data using staging tables.

Tip: In CloudConnect Designer, you should build the load and

merge operations separately. You can validate that the loading

operation has successfully completed before kicking off the

merge operation.

Project Parameters for Data Warehouse

When you are creating CloudConnect projects, you should parameterize values

that may change based on the target GoodData project. For example, if the same

basic ETL process is to be used for multiple projects from multiple source

systems, you should turn access parameters such as username, password, and

access URL into CloudConnect parameters.

In your project, you should define the following project parameters:

Parameter        Description

DSS_USER         The Data Warehouse user identifier to use to connect

DSS_PASSWORD     This parameter can be used at runtime to apply a password to connect to Data Warehouse.

DSS_JDBC_URL     This parameter should be used to define the JDBC URL to access the Data Warehouse project.
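Parameters are referenced in connection settings and SQL using the ${NAME} placeholder form, as in ${DATA_SOURCE_DIR} later in this guide. The sketch below imitates that substitution in plain Ruby so you can see the effect of parameterizing the connection; it is an illustration only, not CloudConnect's own resolver, and the helper name and sample values are hypothetical.

```ruby
# Replaces each ${NAME} placeholder with its value from params.
# Raises KeyError when a referenced parameter is not defined.
def resolve_parameters(text, params)
  text.gsub(/\$\{(\w+)\}/) do
    name = Regexp.last_match(1)
    params.fetch(name) { raise KeyError, "undefined parameter: #{name}" }
  end
end

# Hypothetical values; real values come from your project parameters.
params = {
  'DSS_USER'     => 'etl.user@example.com',
  'DSS_PASSWORD' => 'secret',
  'DSS_JDBC_URL' => 'jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]'
}

puts resolve_parameters('URL=${DSS_JDBC_URL} user=${DSS_USER}', params)
```

Keeping the three values in parameters means the same graph can be pointed at another instance by changing the parameter values only.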

Creating Tables in Data Warehouse from CloudConnect

Before you load data into staging tables, you must create the tables for staging

and production.

Tip: For organization purposes, it is a recommended practice

that you create your table initialization ETL in a separate graph

in CloudConnect Designer.

In this example, staging tables with the “in_” prefix are created for a dataset called

opportunities.

Steps:

1. In the graph, add a DBExecute component. Edit the component.

2. Properties:

1. DB Connection: select the connection you created

2. SQL query: see below.

3. Print statements: true

4. Transaction set: All statements

3. For the SQL query, you must create the staging tables. The example below

creates the table for in_opportunities. Note the use of the in_ prefix for the

staging environment:

CREATE TABLE IF NOT EXISTS in_opportunities (
    _oid IDENTITY PRIMARY KEY,
    id VARCHAR(32),
    name VARCHAR(255) NOT NULL,
    created TIMESTAMP NOT NULL,
    closed TIMESTAMP,
    stage VARCHAR(32) NOT NULL,
    is_closed BOOLEAN NOT NULL,
    is_won BOOLEAN NOT NULL,
    amount DECIMAL(20,2),
    last_modified TIMESTAMP
)

4. Your DBExecute component should look like the following:

Figure: Creating staging tables

5. Save your graph.

Loading Data to Data Warehouse Staging Tables through

CloudConnect

Using a separate DBExecute component, you can use the COPY LOCAL command to populate your staging tables with data from a locally referenced file.

• For more information on the COPY LOCAL command, see Loading Data into Data Warehouse.

• For more information on creating the staging tables, see Creating Tables in Data Warehouse from CloudConnect.

In the following example, you create a DBExecute instance to load the staging table for in_opportunities from the local file opportunities.csv.

Steps:

1. In the graph, add a DBExecute component. Edit the component.

2. Properties:

1. DB Connection: select the connection you created

2. SQL query: see below.

3. Print statements: true

4. Transaction set: All statements

3. For the SQL query, you must specify at least two commands in the following

order.

4. Before copying into the table, the TRUNCATE command is used to ensure

the staging table is empty.

5. The COPY LOCAL command copies from the local source file (opportunities.csv in this case) to the staging table you created.

6. Commands are separated by a semicolon. Your SQL might look like the following:

TRUNCATE in_opportunities;
COPY in_opportunities
    (id, name, created, closed, stage, is_closed, is_won, amount, last_modified)
FROM LOCAL '${DATA_SOURCE_DIR}/opportunities.csv'
SKIP 1
ABORT ON ERROR

7. Your DBExecute component should look like the following:

Figure: Loading staging tables

8. Save your graph.
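If you stage several datasets, the TRUNCATE and COPY pair can be generated from a column list rather than written by hand for each table. This is a minimal sketch that reproduces the statement shape used in the steps above; the helper name staging_load_sql is hypothetical, and the ${DATA_SOURCE_DIR} placeholder is left for CloudConnect to resolve at runtime.

```ruby
# Returns the TRUNCATE + COPY script for one staging table.
def staging_load_sql(table:, columns:, file:)
  <<~SQL
    TRUNCATE #{table};
    COPY #{table}
    (#{columns.join(', ')})
    FROM LOCAL '#{file}'
    SKIP 1
    ABORT ON ERROR
  SQL
end

puts staging_load_sql(
  table: 'in_opportunities',
  columns: %w[id name created closed stage is_closed is_won amount last_modified],
  file: '${DATA_SOURCE_DIR}/opportunities.csv'
)
```

The column list excludes _oid because that IDENTITY column is populated by the database, not by the source file.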

Merging Data from Data Warehouse Staging Tables to

Production

After data has been staged in Data Warehouse, you can use the following basic

steps to merge into your production environment. In this case, you create a

DBExecute instance to MERGE INTO records from the staging tables.

Steps:

1. In the graph, add a DBExecute component. Edit the component.

2. Properties:

1. DB Connection: select the connection you created

2. SQL query: see below.

3. Print statements: true

4. Transaction set: All statements

3. For the SQL query, you must specify the MERGE INTO commands to merge

from staging to production:

MERGE INTO opportunities t
USING in_opportunities s
ON s.id = t.id
WHEN MATCHED THEN
    UPDATE SET name = s.name,
        created = s.created,
        closed = s.closed,
        stage = s.stage,
        is_closed = s.is_closed,
        is_won = s.is_won,
        amount = s.amount
WHEN NOT MATCHED THEN
    INSERT
        (id, name, created, closed, stage, is_closed, is_won, amount)
    VALUES
        (s.id, s.name, s.created, s.closed, s.stage,
        s.is_closed, s.is_won, s.amount)

4. Your DBExecute component should look like the following:

Figure: Merging into Production

5. Save your graph.

After this graph is executed, you may truncate the staging tables.
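Because the MERGE statement repeats the column list three times, it is easy for the UPDATE, INSERT, and VALUES lists to drift apart. The sketch below derives all three from a single list; it is an illustrative helper only (merge_sql is a hypothetical name) and emits the same statement shape as the example above.

```ruby
# Builds a MERGE statement from staging (alias s) into target (alias t).
# The key column is matched on and is excluded from the UPDATE list.
def merge_sql(target:, staging:, key:, columns:)
  updates = (columns - [key]).map { |column| "#{column} = s.#{column}" }
  values = columns.map { |column| "s.#{column}" }

  <<~SQL
    MERGE INTO #{target} t
    USING #{staging} s
    ON s.#{key} = t.#{key}
    WHEN MATCHED THEN
      UPDATE SET #{updates.join(', ')}
    WHEN NOT MATCHED THEN
      INSERT (#{columns.join(', ')})
      VALUES (#{values.join(', ')})
  SQL
end

puts merge_sql(
  target: 'opportunities',
  staging: 'in_opportunities',
  key: 'id',
  columns: %w[id name created closed stage is_closed is_won amount]
)
```

Adding a column to the merge then requires a change in one place only.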

Exporting Data from Data Warehouse using CloudConnect

To export data from Data Warehouse using CloudConnect, you deploy the

DBInputTable component to extract data from your production tables. This

component can be connected to a Writer component to store the data in its new

destination. Typically, this component is the GD Dataset Writer component, which

writes the data to a specified GoodData project.

Steps:

1. Add the DBInputTable component. Edit it.

2. Properties:

1. DB Connection: select the connection you created

2. SQL query: see below.

3. Data policy: Strict is recommended.

4. Print statements: false

3. For the SQL query, you must specify the SELECT command to retrieve the

data from the production table. In the SQL Query Editor, the fields in the

query must be mapped to the output metadata fields for consumption by the

next component in the graph:

Figure: SQL Query for exporting data tables

Tip: You should explicitly list each field that you are extracting from the table. If the table schema changes in the future, the ETL process continues to function as long as the change does not modify the source fields. Avoid using SELECT *.

4. Click OK.

5. The data that is extracted is mapped to the metadata of the DBInputTable

component.

6. To write to a GoodData project, add a GD Dataset Writer component.

7. Create an edge between the two components.

8. In the GD Dataset Writer component, specify the GoodData project

identifier, the target dataset, and the field mappings from DBInputTable

metadata to dataset fields.

9. Save your graph.

Your graph should look like the following:

Figure: Final export graph

Connecting to Data Warehouse from SQL Client Tools

This section describes how to connect to Data Warehouse from SQL clients using

JDBC.

NOTE: CloudConnect Designer is pre-packaged with the JDBC

driver and automatically receives any updates if the driver is

updated. Downloading and installing it in CloudConnect

Designer is not necessary. See Working with Data

Warehouse from CloudConnect.

There are many free and commercial SQL client tools. Feel free to use your preferred tool, as the setup is consistent across tools.

Steps:

These are the basic steps:

1. Download the Data Warehouse JDBC driver. See Download the JDBC

Driver.

2. Add the Data Warehouse JDBC driver into your tool. For more information, please consult the product documentation provided with your SQL client tool.

3. Build your Data Warehouse instance’s JDBC connection string. See

Prepare the JDBC connection string.

4. Use the Data Warehouse driver and JDBC connection string to set up a

connection.

5. You may also be asked for the driver class name:

com.gooddata.dss.jdbc.driver.DssDriver

• For additional details, see Access Data Warehouse From SQuirrel SQL.

Download the JDBC Driver

Connection to Data Warehouse is supported only by using the JDBC driver

provided by GoodData.

This driver enables Data Warehouse connectivity from:

• CloudConnect ETL Designer

  NOTE: The driver is pre-installed in supporting versions of CloudConnect Designer.

• Java-based visual SQL client tools

• Java programming environment, such as JRuby

NOTE: For third-party SQL client tools, the driver is available at the following URL:

https://developer.gooddata.com/downloads/dss/ads-driver.zip

To download the JDBC driver, click Developer Tools.

Data Warehouse Driver Version

If you are having issues with your Data Warehouse connection, you may be asked by GoodData Customer Support to provide the version number of the JDBC driver that you are using for Data Warehouse.

NOTE: You cannot use a Vertica JDBC driver for Data

Warehouse. You must use the Data Warehouse driver provided

by GoodData.

To acquire the Data Warehouse driver version:

• An updated version of CloudConnect Designer always contains the latest version of the Data Warehouse driver. CloudConnect enables automatic updates of the application.

• If you have downloaded the driver from the Developer Portal, you can locate the driver version through one of the following methods:

  • When the ZIP file is unzipped, the driver version number is embedded in the filename.

  • If you no longer have the ZIP file, the version number can be retrieved through standard method calls on the Data Warehouse driver. Use:

    Driver.getMajorVersion() & Driver.getMinorVersion()

    DatabaseMetaData.getDriverMajorVersion() & DatabaseMetaData.getDriverMinorVersion()

    DatabaseMetaData.getDriverVersion()

For more information, please visit GoodData Customer Support.

Prepare the JDBC connection string

Also known as the JDBC URL, the JDBC connection string instructs Java-based

database tools how to connect to a remote database.

For Data Warehouse, the format of the JDBC connection string is the following:

jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]

To acquire your DW_ID:

1. Review your Data Warehouse instances at the following URL:

https://secure.gooddata.com/gdc/datawarehouse/instances

2. For the Data Warehouse instance to use, click the self link.

3. Copy the last part of the URL:

Figure: Data Warehouse Instance ID

Suppose your Data Warehouse instance URL was the following:

https://secure.gooddata.com/gdc/datawarehouse/instances/

Your JDBC connection string is the following:

jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/

This connection string must be applied in CloudConnect Designer or the

database tool of your choice.

• Working with Data Warehouse from CloudConnect

• Access Data Warehouse From SQuirrel SQL
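The conversion from an instance URL to a JDBC connection string is mechanical, so it can be scripted. The following sketch uses only the Ruby standard library; the helper name jdbc_url_for and the instance identifier abc123 are hypothetical and stand in for your own DW_ID.

```ruby
require 'uri'

# Path of a single Data Warehouse instance; captures the DW_ID.
INSTANCE_PATH = %r{\A/gdc/datawarehouse/instances/([^/]+)/?\z}

# Converts an instance URL (the "self" link) to a JDBC connection string.
def jdbc_url_for(instance_url)
  uri = URI.parse(instance_url)
  match = INSTANCE_PATH.match(uri.path.to_s)
  unless match
    raise ArgumentError, "not a Data Warehouse instance URL: #{instance_url}"
  end
  "jdbc:gdc:datawarehouse://#{uri.host}/gdc/datawarehouse/instances/#{match[1]}"
end

# abc123 is a made-up identifier used for illustration.
puts jdbc_url_for('https://secure.gooddata.com/gdc/datawarehouse/instances/abc123')
```

Passing the instance listing URL, which has no identifier after instances/, raises an ArgumentError rather than producing an unusable connection string.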

Access Data Warehouse From SQuirrel SQL

SQuirrel SQL is a powerful, open-source JDBC database interface. For more

information, see http://squirrel-sql.sourceforge.net/.

Steps:

To connect from SQuirrel SQL to your instance, please complete the following

steps.

1. If you have not done so already, download and install the JDBC driver. See

Download the JDBC Driver.

2. Download and install SQuirrel SQL. See http://squirrel-sql.sourceforge.net/#installation.

3. Launch the application. Select File > New Session Properties.

4. To add the JDBC driver, select Drivers > New Driver.

5. Properties:

1. Name: Enter something like GoodData Data Warehouse JDBC.

2. Example URL: Use the following:

jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]

3. Website URL: (optional) You may enter:

https://developer.gooddata.com

4. Click the Extra Class Path tab. Click Add. Navigate your local hard

drive to locate the JDBC driver you downloaded.

5. For the Class Name, enter the following value:

com.gooddata.dss.jdbc.driver.DssDriver

6. The configuration for your new driver should look like the following:

Figure: New JDBC driver for SQuirreLSQL

7. Click OK.

8. A success message indicates that the driver has been properly installed

and registered with the application.

9. In the left navigation bar, click Drivers. Select the GoodData JDBC driver

from the list.

10. Create a new database alias for the connection. From the menu, select

Aliases > Connect....

11. Click the Plus icon.

12. Properties:

1. Name: Suggest GoodData Data Warehouse JDBC.

2. Driver: Select the GoodData JDBC driver that you just created.

3. URL: This value should be modified to be a direct reference to your Data Warehouse instance. The final segment of the URL should be the internal identifier of the Data Warehouse instance. See Reviewing Your Data Warehouse Instances.

4. User Name and Password: Specify the GoodData platform account to

use to connect to the instance.

13. Your alias should look like the following:

Figure: SQuirreLSQL alias for Data Warehouse

14. Click Test to validate the connection.

15. If the connection works, click OK.

16. You are now able to connect to Data Warehouse.

Connecting to Data Warehouse from Java

You can connect to Data Warehouse from Java using the Data Warehouse JDBC Driver. See Download the JDBC Driver.

NOTE: Data Warehouse is designed as a service for building

data warehousing solutions, and it is not expected to be used as

an OLTP database. To provide Data Warehouse data to your

end users, you should push it into either a GoodData project to

deliver analytical dashboards or to your operational database,

which should be optimized to be a backend of your user-facing

application code.

For more information on GoodData projects, see Project Hierarchy.

Connecting to Data Warehouse from JRuby

You may use the following set of instructions to connect to Data Warehouse using

Ruby.

Install JRuby

The driver for connecting to Data Warehouse is available only as a JDBC driver

at this time. No native library in Ruby is available. As a result, you must first install

JRuby.

Steps:

1. Install Ruby.

2. The easiest method is to install using the Ruby Version Manager (RVM).

3. If you don’t have the RVM installed, please visit https://rvm.io/rvm/install.

4. After you have RVM installed, run the following command to install the

Java-based implementation of Ruby onto your local machine:

$ rvm install jruby

5. To switch to the installed version of JRuby:

$ rvm use jruby

6. Optionally, to make JRuby your default Ruby environment, use the following

command:

$ rvm --default use jruby

• (Optional) To restore the original Ruby system as your default, you may use:

$ rvm use system

7. To verify your installed version of Ruby, execute the following command:

$ ruby -v

8. The output should look like the following:

jruby 1.7.9 (1.9.3p392) 2013-12-06 87b108a on Java HotSpot(TM) 64-Bit Server VM 1.6.0_65-b14-462-11M4609 [darwin-x86_64]

Access Data Warehouse using the Sequel library

The Sequel library provides relatively low-level access with nice abstractions and

a friendly programming interface, active development, and JDBC support. It has

been tested for use with JRuby for purposes of integrating with Data Warehouse.

• For more information on the Sequel library, see http://sequel.jeremyevans.net/.

• For quick start documentation, see http://sequel.jeremyevans.net/rdoc/files/doc/cheat_sheet_rdoc.html.

• For a complete reference guide, see http://sequel.jeremyevans.net/rdoc/.

Installing database connectivity to Ruby

Use the following command:

$ gem install sequel

The Ruby database abstraction layer (Sequel) is installed.

Installing the Data Warehouse JRuby support

To install Data Warehouse support for JRuby, you must clone a Git repository and

perform the following installation. Please execute the following steps in the order

listed below.

Steps:

$ git clone https://github.com/gooddata/gooddata-dss-ruby
$ cd gooddata-dss-ruby
$ rvm use jruby
$ rake install

Example Ruby code for Data Warehouse

The following Ruby script provides a simple example of how to connect to Data Warehouse using JRuby and then execute a simple query using Sequel:

#!/usr/bin/env ruby

require 'rubygems'
require 'sequel'
require 'jdbc/dss'

# load and register the Data Warehouse JDBC driver
Jdbc::DSS.load_driver
Java.com.gooddata.dss.jdbc.driver.DssDriver

# replace with your Data Warehouse instance:
dss_jdbc_url = 'jdbc:gdc:datawarehouse://secure.gooddata.com/gdc/datawarehouse/instances/[DW_ID]'

# replace with your GoodData platform login name:
username = '[email protected]'

# replace with your GoodData platform password:
password = 'MyPassword'

# example query
Sequel.connect dss_jdbc_url, :username => username, :password => password do |conn|
  conn.run "CREATE TABLE IF NOT EXISTS my_first_table (id INT, value VARCHAR(255))"
  conn.run "INSERT INTO my_first_table (id, value) VALUES (1, 'one')"
  conn.run "INSERT INTO my_first_table (id, value) VALUES (2, 'two')"
  conn.fetch "SELECT * FROM my_first_table WHERE id < ?", 3 do |row|
    puts row
  end
end

NOTE: Data Warehouse is designed as a service for building data warehousing solutions. It is not intended to be used as an OLTP database. To expose the data in Data Warehouse to your end users, consider pushing the necessary data from Data Warehouse into either a GoodData project, to deliver analytical dashboards, or your operational database, which is optimized to serve as the backend of your end-user-facing application code.

Database Schema Design


Data Warehouse makes a clean distinction between the logical data model,

which defines the tables and columns, and the physical data model, which

identifies how the data is organized using the columnar storage and distributed

across the cluster nodes.

Figure: Agile Data Warehousing Service architecture

• For more information on the differences between the LDM and the PDM, see Data Warehouse Logical and Physical Model.

Logical Schema Design - tables and views

The logical database schema can be created with a standard CREATE TABLE

command. Similarly, views can be created using the CREATE VIEW command.

A Data Warehouse view is just a persisted SELECT statement. There is no significant performance difference between querying a derived result set inlined as a sub-select and querying the same result set persisted as a view.

• Materialized views are not supported.
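As a rough illustration of the commands above, the following Ruby sketch holds a CREATE TABLE statement, a CREATE VIEW statement, and two equivalent queries as strings. The table, view, and column names are hypothetical. The sketch only prints the statements; in practice, each string could be passed to conn.run or conn.fetch in a script like the earlier Sequel example.

```ruby
# Hypothetical table, view, and column names, used for illustration only.
CREATE_ORDERS_TABLE = <<~SQL
  CREATE TABLE IF NOT EXISTS orders (
    id INT,
    customer_id INT,
    amount FLOAT
  )
SQL

# A view persists the SELECT statement, not its result set.
CREATE_LARGE_ORDERS_VIEW = <<~SQL
  CREATE VIEW large_orders AS
  SELECT id, customer_id, amount FROM orders WHERE amount > 1000
SQL

# Querying the view ...
QUERY_VIA_VIEW = <<~SQL
  SELECT customer_id, SUM(amount)
  FROM large_orders
  GROUP BY customer_id
SQL

# ... returns the same rows as inlining the SELECT as a sub-select.
QUERY_VIA_SUBSELECT = <<~SQL
  SELECT customer_id, SUM(amount)
  FROM (SELECT id, customer_id, amount FROM orders WHERE amount > 1000) AS large_orders
  GROUP BY customer_id
SQL

[CREATE_ORDERS_TABLE, CREATE_LARGE_ORDERS_VIEW, QUERY_VIA_VIEW, QUERY_VIA_SUBSELECT].each do |sql|
  puts sql.strip
  puts
end
```

Because the view is only a stored SELECT, either query form should be planned in much the same way.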

Primary and Foreign Keys


Data Warehouse does not enforce the uniqueness of primary keys. However, a

non-unique value in a primary key column causes errors in the following

situations:

• During the load, if data is loaded into a table that has a pre-join projection.

• In join queries at query time, if there is not exactly one dimension row that matches each foreign key value.

Tip: To ensure the uniqueness of your primary keys, use

staging tables and the MERGE command (see Merging Data

Using Staging Tables). If you want to store a version history in

your table, the identifier of the source entity should be neither

declared as a PRIMARY KEY nor referenced by a FOREIGN

KEY column.

Similarly, the referential integrity declared by a foreign key constraint is not enforced during the data load, unless there is a pre-join projection. However, a violation may result in a constraint validation error later, when a join query is processed or a new pre-join projection is created.
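The staging-table approach mentioned in the tip above can be sketched in Ruby as two generated statements: a check for duplicate keys in the staging table, followed by a MERGE into the target. This is a minimal sketch under stated assumptions: the helper methods and the staging table name are hypothetical, and the MERGE form assumes the syntax of the underlying Vertica database. See Merging Data Using Staging Tables for the authoritative form.

```ruby
# Hypothetical names: my_first_table is the target table and
# my_first_table_stage is the staging table holding the new rows.
TARGET = 'my_first_table'
STAGE  = 'my_first_table_stage'

# Builds a query that lists key values occurring more than once in a table.
def duplicate_keys_sql(table, key)
  "SELECT #{key}, COUNT(*) FROM #{table} GROUP BY #{key} HAVING COUNT(*) > 1"
end

# Builds a MERGE that updates rows whose key already exists in the target
# and inserts the remaining rows, so that an existing key is not inserted again.
def merge_sql(target, stage, key, columns)
  assignments = columns.map { |c| "#{c} = s.#{c}" }.join(', ')
  all_columns = [key] + columns
  values      = all_columns.map { |c| "s.#{c}" }.join(', ')
  <<~SQL
    MERGE INTO #{target} t USING #{stage} s ON (t.#{key} = s.#{key})
    WHEN MATCHED THEN UPDATE SET #{assignments}
    WHEN NOT MATCHED THEN INSERT (#{all_columns.join(', ')})
      VALUES (#{values})
  SQL
end

puts duplicate_keys_sql(STAGE, 'id')
puts merge_sql(TARGET, STAGE, 'id', ['value'])
```

Running the duplicate check first matters because the MERGE only protects keys that already exist in the target; duplicates inside the staging table itself must be resolved before merging.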

Altering Logical Schema

Data Warehouse supports table modification via the standard ALTER TABLE

command.

The underlying columnar storage enables adding or removing columns very

quickly, even for very large tables.

NOTE: New columns are not automatically propagated to

associated views, even if a view is created with the wildcard (*).

To add new columns to a view, the view must be recreated

using the CREATE OR REPLACE VIEW command.

Limitations:

• Maximum table columns: 1600 columns

• You cannot add columns to a temporary table.
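The note above about views can be sketched as a pair of statements: one that adds the column, and one that recreates a wildcard view so that it exposes the new column. The helper method and the view and column names below are hypothetical. The statements are only printed here; they could be passed to conn.run in a script like the earlier Sequel example.

```ruby
# Hypothetical helper: returns the statements for adding a column to a table
# and exposing it through a view defined with the wildcard (*).
def add_column_statements(table, view, column, type)
  [
    # Add the new column to the table.
    "ALTER TABLE #{table} ADD COLUMN #{column} #{type}",
    # Recreate the view so that its wildcard picks up the new column.
    "CREATE OR REPLACE VIEW #{view} AS SELECT * FROM #{table}"
  ]
end

add_column_statements('my_first_table', 'my_first_view', 'note', 'VARCHAR(255)').each do |sql|
  puts sql
end
```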

Physical Schema Design - projections


A projection defines how the records specified by logical tables are actually

stored and distributed across the cluster nodes.

The following parameters of a physical data representation can be configured

using a projection:

• Columns to be included and column encoding (run-length encoding, delta encoding, etc.)

• Column ordering

• Segmentation: The rows to keep on the same node and the projections to be replicated instead of split

NOTE: Having multiple projections for the same table impedes

data updates. You should retain only the necessary projections

in your production design.

When a new table is created using the CREATE TABLE command, a new

superprojection is created automatically.

• For more information on the CREATE TABLE extensions that can configure the initial superprojection, see Configuring the Initial Superprojection with CREATE TABLE command.

Additional projections can be created using the CREATE PROJECTION

command.

• See Creating a New Projection with CREATE PROJECTION command.

• For more information on replacing existing projections, see Changing Physical Schema.
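To make the three configurable parameters more concrete, the following Ruby sketch holds one CREATE PROJECTION statement that sets a column encoding, a sort order, and a segmentation. It is an assumption-laden sketch: the projection name is hypothetical, my_first_table comes from the earlier example, the statement assumes the CREATE PROJECTION syntax of the underlying Vertica database, and optional clauses are omitted. See Creating a New Projection with CREATE PROJECTION command for the supported options.

```ruby
# Hypothetical projection name; my_first_table is the table from the
# earlier Sequel example. The statement is printed, not executed.
CREATE_PROJECTION = <<~SQL
  CREATE PROJECTION my_first_table_by_value (
    value ENCODING RLE,
    id
  ) AS
  SELECT value, id FROM my_first_table
  ORDER BY value, id
  SEGMENTED BY HASH(id) ALL NODES
SQL

puts CREATE_PROJECTION
```

In this sketch, the column list carries the encoding, ORDER BY sets the column sort order, and SEGMENTED BY determines which rows are kept on the same node.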

Columns Encoding and Compression

Data Warehouse column storage space can be reduced by applying encoding

and compression techniques. Available encoding methods include:

• run-length encoding (sorted repeating values replaced with the value and a number of occurrences)

• dictionary

• various delta encodings

By default, the AUTO encoding is used on column values. This method applies

LZO compression to CHAR/VARCHAR, BOOLEAN, BINARY/VARBINARY, and

FLOAT columns.

• For INTEGER, DATE/TIME/TIMESTAMP, and INTERVAL type columns, Data Warehouse uses a compression scheme based on the delta between consecutive column values.

Tip: For sorted columns with many distinct values, such as primary keys, the AUTO encoding is usually the best choice. For sorted, low-cardinality columns with many repeating values, run-length encoding (RLE) may be the right choice.

For more detailed information on individual encoding types, see

https://my.vertica.com/docs/6.1.x/HTML/index.htm#9273.htm.

Columns Sort Order

The sort order of a projection determines which queries it is optimized for. Choose it based on the query predicates, especially the columns used in WHERE, GROUP BY, or ORDER BY clauses.

See also the Choose Projection Sorting Criteria performance tip.

Segmentation

In a typical Data Warehouse instance, data may be distributed across three or more nodes of a shared or dedicated cluster. By default, Data Warehouse and the underlying Vertica database can manage this distribution automatically. However, in a high-performance database, developers may require better control over how data is distributed.

In a Vertica database, segmentation controls how data from a table may be

distributed across nodes of a cluster. When a table or projection is created, you

can specify segmentation parameters to define how data is distributed.

• If segmentation is not specified explicitly, data is segmented by a hash of