P system model optimisation by means of evolutionary based search

P System Model Optimisation by Means of Evolutionary Based Search Algorithms Carlos García-Martínez Dept. Computing and Numerical Analysis 14071, University of Córdoba Córdoba, Spain [email protected] Claudio Lima, Jamie Twycross Natalio Krasnogor Centre for Plant Integrative Biology University of Nottingham Sutton Bonington Campus, Nottingham, LE12 5RD ASAP Research Group School of Computer Science Jubilee Campus, Nottingham, NG8 1BB [email protected] [email protected] [email protected] ABSTRACT 1. INTRODUCTION Executable Biology, also called Algorithmic Systems Biology, uses rigorous concepts from computer science and mathematics to build computational models of biological entities. P systems are emerging as one of the key modelling frameworks within Executable Biology. In this paper, we address the continuous backward problem: given a P system model structure and a target phenotype (i.e. an intended biological behaviour), one is tasked with finding the (near) optimal parameters for the model that would make the P system model produce the target behaviour as closely as possible. We test several real-valued parameter optimisation algorithms on this problem. More specifically, using four different test cases of increasing complexity, we perform experiments with four evolutionary algorithms, and one variable neighbourhood search method combining three other evolutionary algorithms. The results show that, when there are few parameters to optimise, a genetic and two differential evolution based algorithms are robust optimisers attaining the best results. However, when the number of parameters increases, the variable neighbourhood search approach performs better. Living cells are the product of an exquisite interplay between a multitude of processes that span many spatial and temporal scales. These processes involved a vast number of small molecules, macromolecules (e.g. RNA, DNA, proteins), as well as cell membranes and organelles [1]. The availability of advanced modelling methodologies is essential for the successful practice of systems and synthetic [2, 3] biology. Computational modelling serves several purposes: it enables the essential features of biological entities and phenomena to be captured; understanding behind those features and their interactions to be disambiguated; and, in some cases, it allows systems/synthetic biologists to move away from qualitative descriptions towards quantitative predictions [19]. One of the most applied modelling techniques for cellular systems has been that of ordinary differential equations [4]. However, since this technique assumes that the dynamics of the cellular processes are continuous and deterministic, its application is inappropriate in many cases. Currently, discrete and stochastic approaches, such as those employed in executable biology [11] and algorithmic systems biology [21], are receiving a great deal of attention because they overcome the aforementioned assumptions. In particular, P systems [22] is a formalism able to integrate stochastic and discrete modelling into a computational framework [7, 8, 25]. P systems abstract from the structure and operation of living cells, representing cell-like membrane structures with compartments, molecules with multisets of objects, and molecular interactions with sets of rules. The temporal evolution of a stochastic P system is determined by the application of algorithms based on Gillespie’s Stochastic Simulation Algorithm (SSA) [13] to the multi-compartmental structure of P systems models [20, 24]. However, as with any modelling approach, P system model building can be a complex endeavour, especially for large models, involving the specification of the model structure and also of the model parameters. For such models, the space of all possible model topologies and parameter values is vast, and sophisticated optimisation methods are required to generate models that fit the behaviour of the target system. Evolutionary Algorithms (EAs) [10] are optimisation procedures inspired by the concepts of biological evolution and Darwinian natural selection. Nowadays, they are widely applied on many different real-world problems. In particular, Categories and Subject Descriptors I.2 [Artificial Intelligence]: Problem Solving, Control Methods, and Search; J.3 [Life and Medical Sciences]: Biology and genetics General Terms Experimentation Keywords Executable biology, P systems, Evolutionary algorithms, Realvalued parameter optimisation Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. To copy otherwise, to republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. GECCO’10, July 7–11, 2010, Portland, Oregon, USA. Copyright 2010 ACM 978-1-4503-0072-8/10/07 ...$10.00. 187 latter case, multisets o1 outside membrane l, and multisets o2 inside membrane l, are replaced by multisets o1 and o2 , respectively. Parameter c is a constant associated with each rule, which determines the propensity according to Gillespie’s theory of stochastic kinetics [13]. Rewriting rules are applied according to a multicompartment extension [24] of Gillespie’s Stochastic Simulation Algorithm (SSA) [13]. certain EA approaches are regarded as the current state-ofthe-art methods to deal with real-valued parameter optimisation problems. Evolution Strategies, Differential Evolution (DE), and Real-coded Genetic Algorithms (GAs) are, amongst others, three of the most important EA families being applied to such problems. In this paper, we analyse the application of EAs on the backward problem: the optimisation of the real-valued parameters of a P system model so that the model behaviour matches a predefined target behaviour. This target behaviour is specified by time series that record some biological measurement such as cell optical density, gene expression levels, molecular concentrations, and so on. We have selected four different EA approaches and a hybrid algorithm combining several inner EAs within the variable neighbourhood search algorithm (VNS) [18]. We perform experiments on four test cases of increasing complexity and compare the results of the different algorithms for a limited number of fitness evaluations. To our knowledge, this is the first study in evolving parameters for P systems. The work in [8] represents the state of the art for evolving structures for P systems, which includes one of the EAs analysed herein (see Section 3.4). For the state of the art of optimisation of systems biology models based on other than P systems please refer to [9]. The structure of the paper is as follows. The next section introduces P systems and their modular assembly. In Section 3, we describe the analysed algorithms. In Section 4, we describe the empirical framework used to investigate the application of EAs to the problem of optimising P system parameters. In Section 5, we present and discuss the results. Finally, conclusions are drawn in Section 6. 2. Cellular behaviour is usually the result of the networked interactions of many molecular species that follow specific connectivity motifs [2]. Thus, biological motifs and modularity are one of the cornerstones of a principled approach to modelling in systems and synthetic biology [3]. A biological module is a separable discrete entity that defines a particular biological function [16]. A P system module is a set of rewriting rules (1) in which some of the objects, kinetic constants, or labels, might be variables. This increases reusability because large models can be constructed by combining common modules instantiated with specific values. Formally, a module M is specified as M (V, C, L) where V are the object variables, which can be instantiated with specific objects, C are variables for the kinetic constants, and L are variables for the labels of the compartments appearing in the rules. There are two major advantages when using modules to automatically design biological cellular models: 1) they help produce biologically valid and plausible models because modules are fundamental building blocks obtained from biological knowledge; 2) they promote model diversity because, while the number of modules is limited, they can be instantiated with different values for their variables, and therefore combinatorially combined to provide a huge variety of candidate models. As an example, we introduce one of the P system modules used in our test cases. Details on the remainder of the modules can be found in [3, 5, 6, 8]. Constitutive or Unregulated expression: This module describes how a gene, gX, is first transcribed into its mRNA, rX (in this case without any transcriptional regulatory factor), and then, how the mRNA is translated into the corresponding protein pX. Cell machinery can also degrade the mRNA and protein. These processes take place in compartment l according to the kinetic constants c1 , . . . , c4 . P SYSTEMS We use a variant of P systems (in their original form introduced by Paun [22]) called stochastic P systems, which are designed for specifying and simulating cellular systems [20]. A stochastic P system is a tuple Π = (O, L, μ, Ml1 , . . . , Mln , Rl1 , . . . , Rln ) where: • O is a finite alphabet of molecules. • L = {l1 , . . . , ln } is a finite set of labels representing compartment types. • μ is a membrane structure that contains n ≥ 1 membranes defining hierarchically arranged compartments. There is a one-to-one correspondence between the membranes and the labels in L, which determine their types. • Mli , 1 ≤ i ≤ n, is the initial configuration of membrane i, which consists of a multiset of objects over O placed inside the compartment of membrane with label li . • Rli = {r1li , . . . , rklil }, 1 ≤ i ≤ n, is a finite set of i rewriting rules associated with the compartment with label li . Their form is as follows: o1 [o2 ]l − → o1 [o2 ]l c = U nReg({X}, {c1 , c2 , c3 , c4 }, {l})9 8 c1 > > r : [gX] − → [gX + rX] l l > > 1 > > c2 = < r2 : [rX]l −→ [rX + pX]l = c3 > > > > r3 : [rX]l −→ [ ]l > > c4 ; : r4 : [pX]l −→ [ ]l As shown in the module definition, X is a variable that can be instantiated with a specific identifier, and the kinetic constants c1 , . . . , c4 can be assigned specific values representing different transcription, translation and degradation rates. 3. EVOLUTIONARY ALGORITHMS FOR REAL-VALUED PARAMETER OPTIMISATION (1) o1 , o2 , o1 , o2 where are multisets of objects over O and l ∈ L is a label. These rewriting rules transform objects inside their associated membrane, and transport objects either inside or outside the membrane. In the EAs [10] are algorithms that mimic aspects of biological evolution and constitute a powerful tool for solving complex high-dimensional problems. They have been successfully applied to many real-world problems. EAs rely on a population 188 of individuals that represent potential solutions to a given problem. These individuals undergo probabilistic operations such as mutation, recombination, and selection, to evolve into better solutions. The aptitude of the individuals is usually measured according to a given fitness function, which is defined according to the problem in hand. Solution encoding is an important aspect of EAs. Solutions (or individuals) generally have to be represented according to the nature of the problem being solved. In particular, when the problem involves the optimisation of several real-valued parameters, the individuals of the population are usually represented as a vector of real numbers (c1 , c2 , . . . , c ) where ci is the ith parameter of the problem and is the total number of parameters. There exist a large variety of EA approaches for realvalued parameter optimisation problems. We briefly describe four representative examples, and a VNS [18] combining three EAs, that we use to address the continuous backward problem of optimising the kinetic constants of P system models to match a prespecified target behaviour. these five schemes to generate each solution. Like other EAs, DE also applies recombination and selection to evolve the population of solutions. For more details about these operators see [26]. 3.3 Opposition-based Differential Evolution Opposition-based differential evolution (ODE) [23] is a modification of DE inspired by opposition-based learning which aims to accelerate convergence speed. More concretely, ODE explores opposite solutions to those present in the current population and keeps the best alternative. Given a point x = (c1 , c2 , . . . , c ), where ci ∈ [ai , bi ], the opposite ˘ = (˘ point x c1 , c˘2 , . . . , c˘ ) is calculated as c˘i = ai + bi − ci . While the opposite population is always inspected in population initialization, during the evolutionary search the process of opposition-based generation jumping is only performed with a given probability Jr . Further details can be found in [23]. 3.4 A Real-coded Genetic Algorithm This method is a steady-state genetic algorithm (GA) for real-value optimization similar to that employed in [8]. The GA uses multi-parent crossover as the sole genetic operator as follows: First, randomly select M > 2 individuals x1 , x2 , . . . , xM from the parameter population with xi = (ci1 , ci2 , . . . , ci ) for i = 1, 2, . . . , M . Then, randomly generate M coefficients αi satisfying: (1) αi ∈ [a, b] where a and b are control parameters of the algorithm such that a < 0 P and b > 1; (2) M α = 1. Finally, generate a new vector i i=1 of constants xnew PMas a non-convex linear combination of xi using xnew = i=1 αi · xi . If the fitness of xnew is better than that of the worst individual in the current population then this individual is replaced by xnew . 3.1 Covariance Matrix Adaptation Evolution Strategy CMA-ES [15] is a derandomised evolution strategy that adapts the complete covariance matrix C of the normal mutation search distribution. The algorithm adapts the covariance matrix based on the evolution path p of previous successful directions. In this way, C is changed to make steps in the direction p more likely. The version of CMAES used in this paper combines weighted recombination and rank-μ-update of the covariance matrix [15]. Therefore, the λ new candidate solutions are generated according to “ ” 2 (t+1) (t) xk ∼ N xW , σ (t) C(t) , k = 1, . . . , λ, (2) 3.5 Variable Neighbourhood Search with Evolutionary Components where N (m, C) stands for a normally distributed random vector with mean m and covariance matrix C. The recombination point is given by the weighted mean of the selected Pμ (t) (t) individuals: x i=1 wi xi:λ , where wi > 0 for all PWμ = i = 1 . . . μ and i=1 wi = 1. VNS with evolutionary components (VNS-ECs) [12] is an example of a novel methodology to design hybrid algorithms which applies specialised EAs as inner operators [17]. VNSECs combines the following three EAs: 1. CMA-ES (Section 3.1): used to generate an initial VNS solution. The applied parameter values are the ones suggested in the C code available from the webpage of the author1 (see Table 3). 2. Micro Cross-generational elitist selection, Heterogeneous recombination, and Cataclysmic mutation [12]: used to perturb the current best solution in order to escape local optima. Following the idea in the original VNS, the intensity of the perturbation performed (Nk ) is increased when the previous improvement does not outperform the current best solution, and decreased otherwise. 3. Continuous Local EA [12]: used to locally optimise the current VNS solution so that a local optimum is accurately reached. Importantly, Continuous Local EA employs a specific replacement strategy and mating scheme to gather and exploit information from the search space, allowing it to enhance its efficiency and efficacy. 3.2 Differential Evolution Differential evolution (DE) [26] is a population-based method that uses differences between current solutions to bias the search. Essentially, a new parameter vector is generated by adding the weighted difference between two (or more) solutions to a third vector. Two typical schemes used are DE/rand/1 and DE/rand/2: xi = xr1 + F · (xr2 − xr3 ) (3) xi = xr1 + F · (xr2 + xr3 − xr4 − xr5 ) (4) where xi ∈ R is the ith generated solution, r1, r2, r3, r4, r5 ∈ [0, N ] are randomly generated and mutually exclusive solution indexes, and F > 0. Two other well-know schemes, DE/best/1 and DE/best/2, can be obtained by simply replacing xr1 with the current best solution xbest . Combining these different schemes, DE/rand-to-best/1 was also proposed: xi = xr1 + λ · (xbest − xi ) + F · (xr1 − xr2 ) (5) Steps (2) and (3) are repeated until a halting condition (in our case a fixed number of iterations) is fulfilled. where λ and F control the balance between exploitation and exploration. In this paper, DE randomly chooses one of 1 189 http://www.lri.fr/∼hansen/cmaes_inmatlab.html Table 1: Target models Test case Test case 1 / Test case 2 13 kinetic constants Test case 3 18 kinetic constants Test case 4 38 kinetic constants Target model Π = (m1 , m2 , m3 )+Fixed modules in Weiss library [3, 5, 6, 8] m1 = P const({X = LuxR}, {c1 = 0.1}) m2 = P lux({X = CI}, {c1 = 1, c2 = 1, c3 = 10000)}) m3 = P luxP R({X = GF P }, {c1 = 1, c2 = 1, c3 = 1, c4 = 1, c5 = 5, c6 = 10−7 , c7 = 5, c8 = 10−7 , c9 = 4}) simulation time: 170 sec. interval: 10 sec. Π = (m1 , m2 , m3 , m4 ) m1 = UnReg({X = gene1}, {c1 = 4.5, c2 = 1, c3 = 0.03, c4 = 0.001}) m2 = P osReg({X = gene1, Y = gene2}, {c1 = 1, c2 = 100, c3 = 5, c4 = 1, c5 = 0.15, c6 = 0.6}) m3 = P osReg({X = gene1, Y = gene3}, {c1 = 1, c2 = 10, c3 = 8, c4 = 1, c5 = 0.15, c6 = 0.6}) m4 = N egReg({X = gene2, Y = gene3}, {c1 = 1, c2 = 0.1}) simulation time: 118 min. interval: 1 min. Π = (m1 , m2 , m3 , m4 , m5 ) m1 = UnReg({X = LuxR}, {c1 = 0.15, c2 = 0.0004, c3 = 0.03, c4 = 0.001}) m2 = P luxR({X = LacI}, {c1 = 0.1, c2 = 0.175, c3 = 1, c4 = 0.0063, c5 = 1, c6 = 0.0063, c7 = 0.01875, c8 = 1, c9 = 1, c10 = 0.001, c11 = 0.004, c12 = 0.03, c13 = 0.001}) m3 = P luxR({X = CI}, {c1 = 0.1, c2 = 0.175, c3 = 1, c4 = 0.0063, c5 = 1, c6 = 0.0063, c7 = 0.01875, c8 = 1, c9 = 1, c10 = 0.1, c11 = 0.004, c12 = 0.03, c13 = 0.001}) m4 = P R({X = LacI}, {c1 = 0.15, c2 = 0.004, c3 = 0.03, c4 = 0.001, c5 = 0.000166, c6 = 0.002, c7 = 0.1666, c8 = 0.002, c9 = 0.0083, c10 = 0.0002}) m5 = P lac({X = F P }, {c1 = 0.15, c2 = 0.004, c3 = 0.03, c4 = 0.001, c5 = 0.000166, c6 = 0.01, c7 = 11.6245, c8 = 0.06}) simulation time: 3600 sec. interval: 36 sec. Plac::gFP=1 PR::gLacI=1 Plux::gCI=1 Plux::gLacI=1 gLuxR = 1 Ps3OC6ext = 5 of any object does not appear in the table, it is set to the default value 0. Notice that, in some test cases, some rules appear more than once because of the particular instantiations of their modules. These repeated rules must be removed and therefore, the number of kinetic constants to be optimised is reduced. The first two test cases consist of a gene regulatory network of three genes that simulate a pulse generator. The target output is the number of molecules of GFP for four different initial states (source). These first and second test cases only differ in the parameter ranges defined for the kinetic constants (Table 2). While for the first case, a narrow search is performed based on biological knowledge about the magnitude of particular kinetic constants, for the second case, most parameter ranges span several orders of magnitude. These two test cases allow us to investigate the importance of having specific knowledge about the parameters in order to find good parameter configurations. The third test case represents a more general network where three genes are able to produce a pulse in the expression of a particular gene. The target output is the number of molecules of the corresponding three types of proteins. The fourth case is the most complex one and represents a network with five genes that behave as a bandwidth detector. The target output is the number of molecules of pLuxR2, pCI, pLacI, and pFP. Table 3 gives the parameter settings for each algorithm (please, refer to the cited publications for descriptions and discussions of each parameter). In the case of VNS-ECs, we performed experiments with two configurations. VNSECs-1 applies the parameter values suggested in the original publication [12], whereas VNS-ECs-2 employs the following changes suggested by preliminary experiments (see Table 3 for details): certain parameters of Continuous Local EA have been modified; execution of CMA-ES at the beginning of the run is replaced by sampling a random solution because CMA-ES was generally not able to rapidly converge fast to one solution; the initial population of Continuous Local EA is not evaluated to save some resources, since this component is not biased by the fitness function to select the individuals performing crossover, its performance is not adversely affected. 4. Initial values GFP=1 LuxR=1 LacI=1 TetR=1 CI=1 source=0/1/2/16 gene1 = 1 gene2 = 1 gene3 = 1 EXPERIMENTS 4.1 Test Cases In this section, we describe the empirical framework used to study the application of the EA-based optimisation methods described in the previous section to the backward problem of optimising P system kinetic constants. Four test cases of increasing complexity have been selected to perform experiments. While the first two test cases were designed for this paper, the more complex problems have been taken from [8], where a detailed analysis of several objective functions for P system structure and parameter optimisation is presented. These test cases model bacterial systems and employ a membrane structure consisting of a single membrane. Therefore, it is sufficient to indicate the vector of modules that compose a rule set Rl , i.e., Π = (O, {l}, [], Ml , Rl ) is represented by (m1 , . . . , mn ). Table 1 gives details on the target models for the four test cases. The target models were simulated in silico to generate the target time series for each test case. Then, the obtained time series were used to attempt to reverse engineer the models that produced them. When the initial value 4.2 Running Conditions Candidate solutions for all the algorithms are encoded as follows: each candidate solution consists of a real-valued vector (c1 , . . . , c ) where ci is the kinetic constant associated with rule ri . Table 2 shows the ranges and scale types of the kinetic constants optimised for each test case. If the scale is linear, the constant can take any value within the given range. If the scale is logarithmic, the constant can take any power of ten whose exponent is within the given range. In the latter case, less knowledge is available and we are more interested in the order of magnitude of the corresponding constant, e.g., the second test case concerns the same P system model as the first test case with, however, the scale 190 Table 3: Parameter setting for the algorithms Table 2: Range and precision of the kinetic constants of the rules for each test case Test case Test case 1 / Test case 2 Test case 3 Module PConst Plux PluxPR UnReg PosReg NegReg Test case 4 Plac PR PluxR UnReg Constant c1 c1 ,c2 c3 c1 to c8 c9 c1 c2 to c4 c1 c2 c3 c4 to c6 c1 c2 c1 c2 c3 c4 c5 c6 c7 c8 c1 ,c7 c2 ,c9 c3 c4 c5 c6 ,c8 c10 c1 ,c2 c3 ,c5 ,c8 ,c9 c4 ,c6 c7 c10 ,c13 c11 c12 c1 c2 c3 c4 Scale linear linear / log linear linear / log linear linear linear linear log linear linear linear log linear linear linear log linear linear linear linear linear linear linear log linear linear linear linear linear linear linear log linear linear linear linear linear log Algorithm CMA-ES Range (0,10) (0,10) / (-10,2) (0,10) (0,10) / (-10,2) (0,10) (0,10) (0,2) (0,10) (-3,3) (0,10) (0,2) (0,10) (-3,3) (0.1,0.3) (0.001,0.01) (0.01,0.05) (-4,-1) (0.0001,0.0003) (0,0.02) (9,12) (0.01,0.08) (0.1,0.3) (0.001,0.01) (0.01,0.05) (-4,-1) (0.0001,0.0003) (0,0.005) (0,0.0005) (0,0.2) (0,2) (0,0.01) (0,0.02) (-4,1) (0.001,0.006) (0.01,0.05) (0.1,0.3) (0.001,0.01) (0.01,0.05) (-4,-1) DE ODE GA VNS Continuous Local EA 1 / 2 versions muCHC Value 10 20 rangei /3 20 0.9 0.5 20 0.9 0.5 0.5 0.3 20 4 -0.5 1.5 20 1e-2 / 1 100 / 50 100 0.5 / 2 0.5 20 0.5 during the optimisation and 1000 for the final solution), and an average model output is obtained for each time series T ˆ1 , X ˆ2 , . . . , X ˆ N ), where X ˆ j = (ˆ (X x1j , x ˆ2j , . . . , x ˆM j ) . In other words, each time series consists of M data points, ∀j = 1, . . . , N . The output time series are then compared to the corresponding target series (X1 , X2 , . . . , XN ), where Xj = T (x1j , x2j , . . . , xM j ) , by means of the randomly weighted sum method used in [8]. This method was shown, in contrast to the root mean square error, to guide search algorithms to reliable final solutions even when different time series range over significantly different scales. All algorithms are run on each test case for 1000 fitness evaluations. The reason for this short evaluation budget is twofold. In the first place, the evaluation of a solution for the tackled problems requires a simulation process that is very time-consuming, i.e. solution evaluation is the bottleneck of the search process. Therefore, we are particularly interested in the capability of the algorithms to reach highquality solutions in a small number of fitness evaluations. Secondly, our future goal is to design an approach to optimise both the structure as well as the constants of P system models, which will involve running an algorithm many times to optimise many different model candidates. In this case, each run of an algorithm will need to consume the minimum resources possible for the global approach to work in an acceptable amount of time. For each algorithm, we calculate the mean, standard deviation, and median, of the best fitness values found over 50 independent runs (i.e. of the fittest candidate solution found by each run). We also record the fitness value of the best solution found over these 50 runs. The same statistics are also obtained for the root mean square error (RMSE) between the fittest solution and the target solution in each of the 50 independent runs. The RMSE is a better metric to use in evaluating overall algorithm performance than the fitness values. Because fitness is calculated using the randomly weighted sum method mentioned above, different fitness values can be assigned to identical solutions, which is not the case with the RMSE. Therefore, conclusions based solely on the fitness values of the final solutions could be misleading. of most of the kinetic constants set to logarithmic instead of linear. This makes the problem more difficult because the interval of final possible values is larger (e.g. c2 of Plux is optimised in (0, 10) for the first test case, but in (10−10 , 102 ) for the second test case). Sometimes, the algorithms will produce candidate solutions containing out-of-bounds parameters. These are dealt with as follows: GA, DE, and ODE repair an out-of-bounds solution by assigning a correctly-bounded random value to any out-of-bounds parameters. VNS-ECs versions apply the mechanism described in [12]. CMA-ES resamples each solution until all parameters are correctly bounded. To avoid significant computational effort in this task, a maximum number of resamples is set to 103 . If an invalid solution is still obtained after this number of resamples, the fitness of the candidate solution is set to a very high fitness value (in case of fitness minimization), where solutions closer to the feasible space are less penalized than solutions further away. Specifically, the penalized fitness is given by: f (x) = f (xrepaired ) + 109 · x − xrepaired , Parameter μ λ (0) σi |P op| CR F |P op| CR F λ Jr |P op| M a b kmax mating threshold maxIters |P op| Initial α alpha bits/variable factorEvals (6) where xrepaired is the closest feasible solution to x. The fitness value of a given candidate solution is computed as follows: firstly, the corresponding candidate model Π is constructed by setting the kinetic constants to the values proposed by the solution. The Multicompartment Gillespie SSA [24] is applied to run Π a given number of times (20 191 Table 4: Results of the algorithms on the four test cases Test case 1 2 3 4 5. Method 1. DE 2. VNS-ECs-2 3. GA 4. ODE 5. CMA-ES 6. VNS-ECs-1 1. GA 2. DE 3. ODE 4. CMA-ES 5. VNS-ECs-2 6. VNS-ECs-1 1. DE 2. ODE 3. GA 4. VNS-ECs-2 5. VNS-ECs-1 6. CMA-ES 1. VNS-ECs-1 2. VNS-ECs-2 3. CMA-ES 4. ODE 5. DE 6. GA Fitness Mean 316.05 326.58 342.36 340.89 365.89 398.5 373.36 453.73 498.05 595.49 656.27 792.98 258.38 284.23 274.11 304.58 298.98 411.76 633.8 743.38 754.38 870.98 889.56 1139.87 St. Dev. 70.69 91.54 82.4 82.51 81.27 57.96 97.99 120.08 165.13 170.75 380.04 405.36 35.47 53.34 97.79 65.12 104.73 88.16 210.73 189.73 125.71 230.29 260.27 237.36 Median. 319.64 332.96 355.48 341.35 376.77 408.4 379.55 427.17 474.82 571.82 528.94 671.4 250.62 277.17 261.21 296.07 282.42 411.68 582.07 714.81 749.43 899.73 881.1 1128.6 Best 193.25 117.99 105.07 184.31 204.5 223.65 154.36 240.52 199.86 371.23 224.33 322.44 195.27 195.58 146.58 197.3 163.09 265.78 245.7 387.46 430.25 307.86 367.1 576.68 RESULTS AND DISCUSSION RMSE Mean 23.36 24.08 24.96 25.17 27.79 29.97 27.41 34.13 36.49 44.55 47.71 58.52 3.51 3.64 3.83 3.95 4.09 4.78 8.87 10.15 10.97 12.37 12.97 18.57 Ranking St. Dev. 5.76 6.89 6.34 6.32 7.43 4.93 7.29 8.53 12.46 12.78 26.56 28.29 0.33 0.41 1.41 0.75 1.21 0.87 3.42 3.03 2.21 3.88 4.66 5.61 Median. 22.6 23.52 26.27 24.68 27.9 30.82 28.08 32.11 35.57 42.02 38.1 50.31 3.5 3.64 3.48 3.91 3.71 4.64 8.24 9.36 10.41 12.87 12.91 18.61 Best 12.92 9.05 7.88 14.39 14.29 16.36 11.8 16.21 14.37 26.38 17.16 23.73 2.66 2.87 2.63 2.72 2.51 3.52 3.46 6.35 6.96 4.57 4.73 8.36 {1,2,3,4} {1,2,3,4} {1,2,3,4} {1,2,3,4,5} {4,5} {6} {1} {2,3} {2,3,5} {4,5} {3,4,5} {6} {1,2,3} {1,2,3,5} {1,2,3,5} {4,5} {2,3,4,5} {6} {1} {2} {3,4} {3,4,5} {4,5} {6} Finally, results are significantly different on the fourth test case, in which there is a larger number of parameters. VNSECs versions are the best algorithms with statistical different performance according to the Mann-Whitney U test. CMA-ES, ODE, and DE seem to perform similarly and the GA performs the least well. In general, we see that GA, ODE, and DE perform better than the other algorithms for problems with few parameters. Among them, GA performs the best when biological knowledge is reduced. On the other hand, VNS-ECs variants perform better for the problem with larger number of parameters. Next, we examine why this is the case. Figure 1 shows the mean convergence graphs of each algorithm on the test cases over 50 independent runs. As said previously and due to computational constraints, to produce this graph we calculated the average of the best fitness found so far using 20 simulation runs, instead of 1000 runs. Therefore, some differences between this figure and the results presented in Table 4 can be expected. Nevertheless, Figure 1 shows that the algorithms present similar convergence velocities with two important differences: 1) when the problem has a larger number of parameters to optimise, e.g. test case 4, GA, DE, and ODE reduce their convergence speeds because evolving populations of individuals consume many resources; 2) when the problem involves fewer parameters, the GA, DE, and ODE algorithms are not affected by resources consumption as much. However, in this case, VNS-ECs versions are affected by the implicit noise present in these problems, because they focus the search process on just one solution. Interestingly, we also note that in the fourth test case, CMA-ES initially seems to struggle to generate feasible solutions, and is therefore not able to improve the best randomly generated solution for some time. This might be related to the fact that, for test case 4, many parameter ranges are of different orders of magnitude, which is not particularly helpful when using CMA-ES [14]. Finally, Figure 2 depicts the target time series of each test case together with the average output of the best solutions for each algorithm. In the first two test cases, the level of Table 4 shows the results for each of the algorithms on the different test cases, with best results highlighted in bold. The algorithms are ranked based on the RMSE average results, as opposed to the single overall best solution obtained, since we are interested in the ability of each algorithm to robustly and consistently provide good solutions. We applied the Mann-Whitney U test with p-value=0.05 to compare and determine the statistical significance of the RMSE average results between algorithms on each test case. Based on this test, for each algorithm, the final column of Table 4 indicates the algorithms for which no statistically-significant difference was found. In the first test case, we can see that most of the algorithms (DE, VNS-ECs-2, GA, and ODE) perform similarly because the Mann-Whitney U test did not find significant differences between their RMSE results. CMA-ES and VNSECs-1 clearly performed worse than the other algorithms. For the second test case, the Mann-Whitney U test finds more differences between the performance of the algorithms. GA is the best algorithm and VNS-ECs-1 is the worse one according to the statistical analysis. The rest of the algorithms obtain similar results between themselves although DE seems to perform slightly better. Since the first and second test cases are the same problem but there is less biological knowledge about the parameter ranges in the latter, we can compare the results of the algorithms on these two problems. We can see that all the algorithms obtain worse results when biological knowledge is reduced. However, the performance of GA is less severely affected than the others. In the third test case, many algorithms obtain similar performance, as in the first test case. Among the algorithms, DE, ODE, and GA perform best. In this test case, the scale of all the parameters but one is linear and subsequently, their search intervals are not too large. The fact that the conditions of test cases 1 and 3 are similar may explain why the results are also similar on both test cases. However, the results of VNS-ECs-2 are now considerably worse than the best performing algorithms. 192 Test case 1 Test case 2 800 700 Fitness Average Test case 3 2000 CMA-ES DE ODE GA VNS-ECs-1 VNS-ECs-2 600 1500 500 Test case 4 700 CMA-ES DE ODE GA VNS-ECs-1 VNS-ECs-2 2500 CMA-ES DE ODE GA VNS-ECs-1 VNS-ECs-2 600 500 CMA-ES DE ODE GA VNS-ECs-1 VNS-ECs-2 2000 1500 1000 400 400 1000 500 300 300 200 200 400 600 1000 0 800 200 Evaluations 400 600 200 1000 800 200 400 Evaluations 600 500 1000 800 200 400 Evaluations 600 800 1000 Evaluations Figure 1: Convergence graphs of the algorithms on each test case Test case 4 Test case 1 120 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 8 6 40 20 4000 3500 3000 2500 2000 1500 0 4000 3500 3000 2500 4000 0 4000 3500 3000 2500 0 2000 2 0 1500 4 180 160 140 120 100 80 60 40 0 6 20 0 0 8 40 1000 20 10 3500 60 12 3000 80 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 14 pFP 100 40 20 2000 120 500 60 16 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 140 pLacI 80 1500 160 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 100 2500 Test case 2 120 500 180 160 140 120 80 100 60 40 0 20 0 0 1000 0 2000 2 60 1500 20 80 1000 4 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 100 1000 40 0 No. of proteinGFP 140 10 500 60 160 12 pCI 80 14 500 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 100 pLuxR2 No. of proteinGFP 120 Test case 3 2 0 protein3 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 15 10 5 120 100 80 60 40 20 0 60 0 40 120 100 80 60 40 20 0 0 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 8 6 4 20 10 20 0 20 16 14 12 10 120 Target CMA-ES VNS-ECs-2 DE GA ODE VNS-ECs-1 30 25 100 40 protein2 protein1 50 20 18 80 60 Figure 2: Average of the simulated output of the solutions of the algorithms on each test case ter than the average results (Table 4), it is expected that a more accurate fit to this time series is obtained in some runs. Finally, considering test case 4, we can see that almost all the algorithms produce solutions that seem to match the levels of pLacI and pCI well, but have difficulties matching the levels of the other two molecules (except pFP for CMAES). It can also been seen that the good performance of the VNS-ECs variants when tackling this test case is due to a better fit of the pLacI level, which has a larger scale than the other time series where the algorithms present differences (pLuxR2 and pFP). In particular, though CMA-ES fits the pFP level time series better than the VNS-ECs variants, it does not match the pLacI one as well as the VNS-ECs variants and as a consequence obtains worse results in Table 4. GFP achieved from different initial values are presented in the same graph. In the other cases, different graphs are used for the levels of each molecules. Comparing the graphs for the first two test cases, we see that all the algorithms experience a noticeable degradation in their performance when less knowledge about the problem is available (test case 2). Among the algorithms, the VNS-ECs variants are the most affected. Regarding test case 3, all the algorithms present similar results, accurately matching the level of protein1. There is a slight difference in matching the level of protein2, and the level of protein3 is rarely well matched. This fact indicates that the algorithms usually find difficulties matching this time series. However, since the best results among the 50 runs of the algorithms are usually significantly bet- 193 6. CONCLUSIONS AND FUTURE WORK In this paper, we have analysed the application of EAs to the backward problem of optimising the kinetic constants of P systems in order to match a target model. Four different EAs and a VNS method combining three inner EAs have been applied to four different test cases of increasing complexity. Limited computational resources (1000 fitness evaluations) have been imposed because of the increased time consumption when evaluating candidate solutions. Based on our test cases, the results show that: 1) when the number of kinetic constants being optimised is small, GA, DE, and ODE become robust optimisers attaining the best results; and 2) when the number of parameters to be optimised increases, the VNS-ECs variants obtain better results. To our knowledge, this is the first study that analyses the application of EAs to the continuous backward problem. In the near future, we intend to analyse the way the algorithms try to match the different target time series throughout their execution. Moreover, we are interested in the ability of the studied algorithms to provide diverse solutions for the backward problem, that is, alternative parameter sets that match the target time series equally well. We also intend to use the insights we have gained in this paper as a basis for developing efficient algorithms for optimising model structure. 7. [8] [9] [10] [11] [12] [13] [14] [15] ACKNOWLEDGMENTS Authors acknowledge F.J. Romero-Campero for his helpful discussions and support. C. Garc´ıa-Mart´ınez acknowledge Research Projects TIN2008-05854 and P08-TIC-4173. N. Krasnogor, J. Twycross, and C. Lima acknowledge the UK EPSRC project EP/E017215/1 and the BBSRC projects BB/F01855X/1 and BB/D0196131. [16] [17] 8. ADDITIONAL AUTHORS Additional authors: M. Lozano (Dept. of Computer Science and Artificial Intelligence, CITIC-UGR, University of Granada, Spain, email: [email protected]). [18] 9. [19] REFERENCES [1] B. Alberts, D. Bray, J. Lewis, M. Raff, K. Roberts, and J. Watson. Molecular Biology of the Cell. Garland Science, 4th edition, 2002. [2] U. Alon. An Introduction to Systems Biology. Mathematical and Computation Biology Series. Chapman & Hall/CRC, 2006. [3] E. Andreianantoandro, S. Basu, D. Karig, and R. Weiss. Synthetic biology: new engineering rules for an emerging discipline. Mol. Syst. Biology, 2(28):E1–E14, 2006. [4] M. Atkinson, M. Savageau, J. Myers, and A. Ninfa. Development of genetic circuitry exhibiting toggle switch or ocillatory behavior in Escherichia coli. Cell, 113:597–607, 2003. [5] S. Basu, Y. Gerchman, C. Collins, F. Arnold, and R. Weiss. A synthetic multicellular system from programmed pattern formation. Nat., 434:1130–1134, 2005. [6] S. Basu, R. Mehreja, S. Thiberge, M. Chen, and R. Weiss. Spatiotemporal control of gene expression with pulse-generating networks. In Proc. Natl. Acad. Sci. USA, volume 101, pages 6355–6360, 2004. [7] F. Bernardini, M. Gheorghe, N. Krasnogor, R. Muniyandi, M. Perez-Jim´enez, and F. Romero-Campero. On P systems as a modelling tool for biological systems. In R. Freund, G. Paun, and G. R. A. Salomaa, editors, Workshop on [20] [21] [22] [23] [24] [25] [26] 194 Membrane Computing, LNCS 3850, pages 114–133. Springer, 2005. H. Cao, F. Romero-Campero, S. Heeb, M. Camara, and N. Krasnogor. Evolving cell models for systems and synthetic biology. Systems and Synthetic Biology, 4(1):55–84, 2010. A. Dr¨ ager, M. Kronfeld, M. Ziller, J. Supper, H. Planatscher, J. Magnus, M. Oldiges, O. Kohlbacher, and A. Zell. Modeling metabolic networks in C. glutamicum: a comparison of rate laws in combination with various parameter optimization strategies. BMC Syst. Biology, 3(5):1–24, 2009. A. Eiben and J. Smith. Introduction to Evolutionary Computing. Springer-Verlag, 2003. J. Fisher and T. Henzinger. Executable cell biology. Nat. Biotech., 25:1239–1249, 2007. C. Garc´ıa-Mart´ınez and M. Lozano. A continuous variable neighbourhood search based on specialised EAs: Application to the noiseless BBO-benchmark 2009. In Genetic Evolutionary Computation Conf (GECCO), pages 2287–2294, 2009. D. Gillespie. Stochastic simulation of chemical kinetics. Annu. Rev. Phys. Chem., 58:35–55, 2007. N. Hansen. The CMA evolution strategy: A tutorial. Online: http://www.lri.fr/ hansen/cmatutorial.pdf, 2009. N. Hansen and S. Kern. Evaluating the CMA evolution strategy on multimodal test functions. In X. Yao, E. Burke, J. Lozano, J. Smith, J. Merelo-Guerv´ os, J. Bullinaria, J. Rowe, P. Tiˇ no, A. Kab´ an, and H.-P. Schwefel, editors, Proc. of the Int. Conf. on Parallel Problem Solving from Nature, LNCS 3242, pages 282–291. Springer Berlin, Heidelberg, 2004. L. Hartwell, J. Hopfield, S. Leibler, and A. Murray. From molecular to modular cell biology. Nat., Impacts, 402:47–52, 1999. M. Lozano and C. Garc´ıa-Mart´ınez. Hybrid metaheuristics with evolutionary algorithms specializing in intensification and diversification: Overview and progress report. Comput. Oper. Res., 37:481–497, 2010. N. Mladenovic and P. Hansen. Variable neighborhood search. Comput. Oper. Res., 24:1097–1100, 1997. A. Moya, N. Krasnogor, J. Pereto, and A. Latorre. Goethe’s dream. Challenges and opportunities for synthetic biology. EMBO reports, 10, S1:28–32, 2009. M. P´erez-Jim´enez and F. Romero-Campero. P systems, a new computational modelling tool for systems biology. Trans. Comput Syst. Biology, VI:176–197, 2006. C. Priami. Algorithmic systems biology. Commun. ACM, 52(5):80–88, 2009. G. P˘ aun. Membrane Computing: An Introduction. Springer-Verlag, Berlin, 2002. S. Rahnamayan, H. Tizhoosh, and M. Salama. Opposition-based differential evolution. IEEE Trans. Evol. Comput., 12(1):64–79, 2008. F. Romero-Campero, J. Twycross, M. Camara, M. Bennett, M. Gheorghe, and N. Krasnogor. Modular assembly of cell systems biology models using P systems. Int. J. Fdn. Comput. Sci., 20:427–442, 2009. F. Romero-Campero, J. Twycross, H. Cao, J. Blakes, and N. Krasnogor. A multiscale modelling framework based on P systems. In Proceedings of the Workshop on Membrane Computing (WMC9), LNCS 5391, pages 63–77. Springer, 2009. R. Storn and K. Price. Differential evolution–a simple and efficient heuristic for global optimization over continuous spaces. J. Global Optim., 11(4):341–359, 1997.

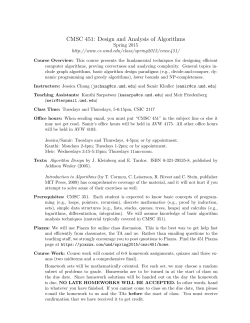

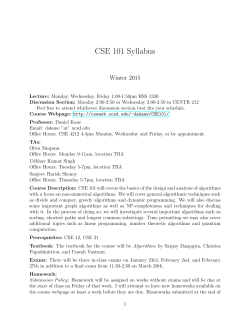

© Copyright 2026