Learning stochastic timed automata from sample executions Andr´ e de Matos Pedro

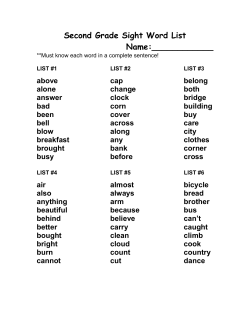

Learning stochastic timed automata from sample executions Andr´e de Matos Pedro1 , Paul Andrew Crocker2 and Sim˜ao Melo de Sousa3? 1 2 University of Minho, Braga, Portugal, [email protected], IT - Instituto de Telecomunica¸co ˜es, University of Beira Interior, Covilh˜ a, Portugal, [email protected] 3 LIACC - Laborat´ orio de Inteligˆencia Artificial e Ciˆencia de Computadores, University of Beira Interior, Covilh˜ a, Portugal, [email protected] Abstract. Generalized semi-Markov processes are an important class of stochastic systems which are generated by stochastic timed automata. In this paper we present a novel methodology to learn this type of stochastic timed automata from sample executions of a stochastic discrete event system. Apart from its theoretical interest for machine learning area, our algorithm can be used for quantitative analysis and verification in the context of model checking. We demonstrate that the proposed learning algorithm, in the limit, correctly identifies the generalized semi-Markov process given a structurally complete sample. This paper also presents a Matlab toolbox for our algorithm and a case study of the analysis for a multi-processor system scheduler with uncertainty in task duration. 1 Introduction Stochastic processes are commonly used as an approach to describe and make a quantitative evaluation of more abstract models which may be described by a high-level specification. When a model is evaluated we can use it for the design phase and subsequently make an implementation. However, even if a model is validated this does not imply that the implementation is in conformity with the model. This is normally due to bugs in the implementation, wrong interpretation of the model, or possibly, wrong approximations in the construction of the stochastic model. Unfortunately techniques for discovering these errors such as testing are unlikely to be sufficient due to the difficulty of achieving a complete or total coverage. This paper is concerned with how these models can be derived from sample executions provided by an implementation in order to verify them. There are several learning algorithms for learning probabilistic and stochastic languages [3,13,20], including a learning algorithm for continuous-time Markov processes (CTMP) [19], but there is no algorithm in the case of processes that do not hold ? This work was supported CCO/101904/2008). in part by the FCT CANTE project (Refa PTPC/EIA- the Markov property such as generalized semi-Markov processes (GSMP) [10]. Thus, the learning of stochastic timed automata covered in this paper falls in the category of language identification [2,17,1]. For most of the methods in this category, the identified stochastic languages are inferred from a set of sample executions, i.e., these samples are a particular multi-set of the original language to identify, and the inference has as target the identification of the language in the limit, i.e., if the number of samples tends towards infinity then the learned language will converge to the original language that generated the sample [11]. Learning of stochastic languages essentially follows a common method, firstly establishing an equivalent relation between the states, then constructing a prefix tree from samples provided by the original stochastic language, and lastly describing an algorithm for the merge of equivalent states which is called state merge. In this paper, we address the problem of learning generalized semi-Markov processes that are the most known extensive stochastic processes when lifetimes can be governed by any continuous probabilistic distributions [7]. From classical Markov processes, exponential probability distributions are not sufficient to model the lifetime of a product such as an electronic component [16] or even model a computer process [12]. The use of generalized semi-Markov processes may cover a wider set of problems however they are more complex and analytically intractable. 1.1 Contribution of the paper The learning algorithm we shall present infers a GSMP model from a given set of trajectories and therefore must be capable of inferring the model by running the deployed system in a test phase and of learning trajectories according to the observed distributions. The learned stochastic timed automaton that is generated by a GSMP is a model that can be used by existing statistical model-checkers [15,23,22,5] and by the existing performance evaluation tools for further analysis and thereby ultimately helping to find bugs in the post-implementation phase. Learning algorithm for GSMP may also potentially be used to perform automatic verification for stochastic discrete event systems. In addition we also establish the correctness of our algorithm. We ensure that, in the limit, when the samples grow infinitely the learned model converges to the original model. Thus, a set of conditions like the definition of inclusion of a prefix tree in a GSMP have to be ensured as well as the definition of probability measure of paths. 1.2 Structure of the paper In section 2 some preliminary definitions are given in order to establish the learning algorithm detailed in section 3. In section 4 we demonstrate the correctness of our algorithm. In section 5, the tool and a practical application are presented. In the final section 6 we give our conclusions and discuss directions for further work. 2 Preliminaries In order to formulate the next notations we describe the concept of finite path that is established by a prefix, σ≤τ = {s0 , he1 , t1 i , s1 , he2 , t2 i , ..., sk , hek+1 , tk+1 i} based on the infinite sequence σ = {s0 , he1 , t1 i , s1 , he2 , t2 i , · · · } of a GSMP, where sk is a state, ek is an event, tk is the holding time of the event ek , and Pk+1 τ = i=1 ti is the path duration upon k. A set of paths with prefix p is denoted by P ath(p), where p shall be σ≤τ . Some notation will now be introduced to describe the structure of the algorithm. The definitions are based on symbol (’k’) that symbolizes a path with respect to an element of a particular set (of states X , of events E or of holding times G) and brackets (’[’;’]’) a sequential identification, as follows: σkX [s, i] is the ith state of the state sequence that begins in state s, σkE [s, i] is the ith event of the event sequence that begins in state s, σkG [s, i] is the ith holding time of the event sequence (σkE [s, i]) that begin in s state, η(σkE [s, i]) = σkX [s, i − 1] is a function that returns the state associated to an event ei , ε(σkX [s, i]) = σkE [s, i + 1] is a function that given a state of a path returns its associated event, and δ(σkE [s, i]) = σkG [s, i] is a function that given an event σkE [s, i] returns its holding time σkG [s, i]. A sequence of events he1 , e2 , e3 , . . . , ek i produced by the prefix tree that accepts the prefix σ≤τ is denoted by σ≤τ kE . A prefix tree (denoted P t) that has an acceptor P ath(σ≤τ ) (a set of paths with prefix σ≤τ ), is a tree P t(P ath(σ≤τ )) = (F, Q, ρ, %, δ) where F is a set of leaf nodes of the prefix tree (i.e., F = P ath(σ≤τ kE )), Q is the set of nodes of the prefix tree composed by the sequence of events from P ath(σ≤τ kE ) (i.e., Q represents all accepted sequences in the prefix tree), ρ : Q → [0, 1] is the function that associate the expectation value for each node n ∈ Q, % : Q → R≥1 ×...×R≥1 is the function that associate each node with a n-tuple of clock values, and δ : Q → Q ∪ ⊥ is the transition function which have the following definition, δ(s, λ) = s where λ is the empty string and s is the reference point (where all samples are measured), δ(s, e) =⊥ if δ(s, e) is not defined, and δ(s, xe) = δ(δ(s, x), e), where x ∈ Q and e ∈ E, δ(s, xe) =⊥ if δ(s, x) =⊥ or δ(δ(s, x), e) is undefined. A generalized semi-Markov process is a stochastic process {X(t)} with state space X, generated by a stochastic timed automaton (sta, for short), sta = (X , E, Γ , p, p0 , G) where X is a finite state space, E is a finite event set, Γ (x ) is a set of feasible or enabled events, defined for every x ∈ X , with Γ (x ) ⊆ E, p(x0 ; x, e0 ) is a state transition probability (x0 to x given event e0 ) defined for every x, x0 ∈ X and e0 ∈ E such that ∀e0 ∈ / Γ (x )p(x 0 ; x , e 0 ) = 0 , p0 (x) is the probability mass function (pmf ) P r[X0 = x], x ∈ X of the initial state X0 , and finally G = {Gi : i ∈ E} is a stochastic clock structure where Gi is a cumulative distribution function (cdf ) for each event i ∈ E. The probability measure µ for a cylinder set composed by a prefix σ≤τ , C (σ≤τ , hEk , Yk∗ i , Xk , ..., Xn−1 , hEn , Yn∗ i , Xn ) accordingly to [23], can be defined recursively as µ(C(σ≤τ , hEk , Yk∗ i , Xk , ..., hEn , Yn∗ i , Xn )) = Pe (s0 ; σ≤τ ) · He (t; ·, σ≤τ ) · ∗ µ(C(σ≤τ ⊕ (he, ti , s0 ) , Ek+1 , Yk+1 , Xk+1 , ..., Xn−1 , hEn , Yn∗ i , Xn )) where the recursive base case is µ(C(s0 , hE1 , Y1∗ i , X1 , ..., hEn , Yn∗ i , Xn )) = 1, Pe (s0 ; σ≤τ ) is the next-state probability transition matrix given an event e, and He (t; ·, σ≤τ ) is the density function of triggering the event e upon t time units. The enabled events in a state race to trigger first, the event that triggers first causes a transition to a state s0 ∈ X according to the next-state probability matrix for the triggering event. The GSMP is considered as analytically intractable and the probability measure formulation is not at all intuitive. 3 Learning stochastic timed automata We shall now present a novel algorithm for learning GSMP from sample executions (fully detailed in [6,7]), where the GSMP are processes generated by stochastic timed automata. In order to ensure the correctness of our algorithm, we define first an inclusion relation between the prefix tree and the sta. Next, we define the similarity relation between the states, and lastly we describe the algorithm for the merge of compatible states which is commonly called state merge. 3.1 The inclusion relation and the state relation Before introducing the definitions (1) and (2), we need to define two auxiliary functions to simplify the notation of the relation between paths and the prefix tree, as follows: – τ (s, x) gives the set of feasible events of a given event sequence x from a prefix tree P t(P ath(σ≤τ )), {y ∈ E | δ(δ(s, x), y) 6=⊥}, for instance, from a set of sequences {x a, x b, ...} we get {a, b, ...}, and – ν(σkX [s, i]) maps a state σkX [s, i] to u, where u ∈ Q is a sequence of events accepted by the prefix tree P t(P ath(σ≤τ )). One says that a prefix tree P t(P ath(σ≤τ )) is a particular case of a GSMP, or in other words a sta. However, only the relation between the data structures is ensured with this definition, we shall need to establish a correction of the state merge algorithm as well (as we will see later). Definition 1. The prefix tree P t(P ath(σ≤τ )) = (F, Q, ρ, %, δ), denoted P tsta, for a set of multiple paths P ath(σ≤τ ) is a particular sta, P tsta(P ath(σ≤τ )) = (X , E, Γ , p, p0 , G) where X = Q; E is the set of single and unique events in the F set; Γ (si ) = τ (s, ν(si )); p(s0 ; s, e∗ ) = 1 if δ(ν(s), e∗ ) 6=⊥ and ν(s0 ) 6=⊥, otherwise p(s0 ; s, e∗ ) = 0; p0 (s) = 1; and G is a set of distributions estimated by sample clocks associated on each event, given by the function %. The P tsta(P ath(σ≤τ )) is a GSMP consistent with the sample in P ath(σ≤τ ). For all paths with prefix σ≤τ there exists a corresponding execution in the GSMP that produces the same path. Now, we introduce the notion of a stable equivalence relation that establishes the similarity between states. This relation, that is applied statistically, allows the creation of a more abstract model from a set of paths P ath(σ≤τ ). The size of the model at each equivalence between states is reduced. Definition 2. Let M = (X , E, Γ , p, p0 , G) be a sta, a relation R ⊆ X × X is said to be a stable relation if and only if any s, s0 have the following three properties, |Γ (s)| = |Γ (s 0 )| (1) there is a one to one correspondence f between Γ (s) and Γ (s 0 ), if ∃e ∈ E and ∃ n ∈ X such that p(n; s, e) > 0, then 0 0 0 0 (2) 0 ∃ n ∈ X such that p(n ; s , f (e)) > 0, G(s, e) ∼ G(s , f (e)), and (n, n ) ∈ R and if ∃e ∈ E and ∃n, n0 ∈ X such that n 6= n0 , p(n; s, e) > 0 and p(n0 ; s, e) > 0 then p(n; s, e) ≈ p(n; s0 , e) and p(n0 ; s, e) ≈ p(n0 ; s0 , e) (3) where |Γ (s)| is the number of active events in the state s, p is a probabilistic transition function, G is a probability distribution function, and the tilde (∼) and double tilde (≈) notations denote ”with same distribution” and ”with same probability” respectively. Two states s and s0 of M are said equivalent s ≡ s0 if and only if there is a stable relation R such that (s, s0 ) ∈ R. A concrete example is now described for the application of the definition (2). For instance, suppose that we have |Γ (s)| = |Γ (s 0 )| = 2, Γ (s) = {a, b}, and Γ (s 0 ) = {c, d }. The equation (1) is trivially satisfied, i.e., the feasible event set have the same size. However, the equation (2) and (3) are not trivially satisfied. To be satisfied we need to conclude that G(s, a) ∼ G(s0 , c) and G(s, b) ∼ G(s0 , d), or G(s, a) ∼ G(s0 , d) and G(s, b) ∼ G(s0 , c) is true, if G(s, a) ∼ G(s, b), G(s, a) ∼ G(s0 , c) or G(s, a) ∼ G(s0 , d) then p(n; s, a) ≈ p(n0 ; s0 , b), p(n; s, a) ≈ p(n0 ; s0 , c), p(n000 ; s, a) ≈ p(n000 ; s0 , d) respectively, otherwise a test for two Bernoulli distributions p is not necessary [3], and all states reachable by s and all states reachable by s0 must also form a stable relation, i.e., the next states of (s, s0 ) also have to satisfy these three properties.4 3.2 Testing statistically the similarity of states The similarity test follows the same scheme of algorithms RPNI [17] and ALERGIA [3], except for: the compatible function which incorporates a different statistical test structure, there is an estimator for unknown new clocks, and there is an event distribution estimator. 4 In the definition (2) the real event identifiers are not necessary but we need to know that the sets of feasible events have associated for each event the same distribution. Algorithm 1: Testing statistically the similarity of states (T3S) input : A set of paths with prefix σ≤τ , P ath(σ≤τ ), and a type I error α between [0; 1]. output: A sta M. M = Ptsta (scheduler estimator(P ath(σ≤τ ), P t(P ath(σ≤τ )))) ; attempt ← 1; while attempt > 0 do attempt ← 0; C ← clusterize(M); for n ← 1 to |C| do for k ← 1 to |C n | do x ← k + 1; n while C n,x 6= C n,|C | do if is active(C n,x ) then if similar(C n,k , C n,x , α) then dmerge(M, C n,k , C n,x , ·, ·); inactivate(C n,x ); attempt ← attempt + 1; // See definition (1) x ← x + 1; M = infer distributions(M); The algorithm 1 together with the auxiliary functions scheduler estimator, similar, and infer distributions establish a new methodology to learn GSMP, which are processes that hold a semi-Markov property. We call the presented solution model identification in the limit. The algorithm 1 has notations associated to the ordered set of clusters and also between these cluster elements, as follows: – the set of n ordered clusters C, classified by events, are denoted by C n , and – C n,k is the k th element of cluster C n , for each 1 ≤ n ≤ |C| and 1 ≤ k ≤ |C n |. The clustering function clusterize produces groups of elements C with a selection based on the feasible event set τ (s. ) for each state s. of M, where M at first attempt is equal to Ptsta (P t(P ath(σ≤τ ))). The is active and inactivate functions allow that only the prefix tree nodes that were not merged are used, and the function similar tests the similarity between two feasible event sets τ (C n,k ) and τ (C n,x ). The testing statistically the similarity of states (T3S) algorithm is subdivided in three blocks. The first block is composed by a clusterize function that clusters the states with an equal active event set (the function τ ). The clusterize function establishes a plain static equivalence between states, nevertheless we need to establish a while cycle with attempt > 0 to cover the other cases such as when dmerge changes the clock samples of the similar states. With this clusterize function we guarantee equation 1, which says that only states with event sets of the same size can be merged. In the second block we use the similar function to test when two states are similar. This function is defined as similar and it uses the Kolmogorov-Smirnov test [8, p. 552] to decide if two empirical probabilistic distributions are equal. It verifies whether there exists a one to one correspondence of events between two active event sets through a statistical equivalence. If there is a correspondence for all events of an active event set, the equation 2 is satisfied. Lastly, the algorithm 1 merges the equal states by the function composed by equation 7. It initializes Function scheduler estimator(P ath(σ≤τ ), P t(P ath(σ≤τ ))) input : A P ath(σ≤τ ) with initial state s, and a P t(P ath(σ≤τ )). output: The P t(P ath(σ≤τ )) with replaced old clocks by original values of clocks. for n ← 1 to |P ath(σ≤τ )| do for l ← 2 to |σ n | do for x ← 0 to l − 1 do // Decrement p p ← l − x; if σ n kE [s, l] 6∈ τ (ν(σ n kX [s, p])) and |τ (ν(σ n kX [s, p]))| ≤ 1 and σ n kE [s, p] = σ n kE [s, l] then break; if p > 1 then p ← p + 1; if σ n kX [s, p] 6= σ n kX [s, l] then Val ← 0; for t ← p to l do // Estimating Val ← Val + σ n kG [s, t]; n n if σ kX [s, t] = σ kX [s, l then break; replace(P t(P ath(σ≤τ )), ν(σ n kX [s, l]), Val); the construction of the sta. This function defined according to the equation 7 solves the problem of non-deterministic merge of states when two states have the same set of events. Inferring the state age structure. The considered stochastic process, the GSMP, requires a state age memory [4,10]. This state age structure, normally identified as a scheduler, allows the use of different continuous distributions for each inter-event time, i.e., the inter-event times between events of a GSMP are not equal. This is not true in CTMP where all inter-event times follow an exponential distribution. The scheduling of events is a data structure that allows the calculation of the next event to be triggered. We introduce the notion of scheduler estimation in order to calculate the history of clock values for each event. Thus, we reconstruct values sampled from new clocks to estimate the events distribution of the model that produces those executions. For instance, suppose that we have two events a and b that can be triggered in a state s0 , where s0 is the initial state of the model, and there are two random variables Xa ∼ E(0.2) and Xb ∼ W (1, 0.1) associated to each event. The events a and b begin labeled as new clock and therefore two samples are given by random variables, respectively, Xa and Xb . Given the samples xa = 1.2 and xb = 0.5 from their respective distributions, the event b wins. Next, the clock value of event b is subtracted and is stored with value 1.2 − 0.5 = 0.7 and a new clock is sampled to b. Then, the event a wins with value 0.7 versus the event b with new clock 1.4. Therefore we can calculate the original value of the event a from the produced sample execution {s0 , (b, 0.5), s1 , (a, 0.7), ·} adding inter-event times between a and b, 0.5 + 0.7 = 1.2. So, we can say that the value sampled in state s0 to the event a has the value 1.2, which is true. Although this scheme can be extended recursively to any finite sample execution, we need to clearly identify the new and old clocks for any path. In order to check the definition (2), only the new clock samples are suitable to predict the distributions associated to each event i. The estimation process happens essentially due to the existence of the map function ν (defined in 3.1). The function scheduler estimator has a particular notation of order in a set of paths P ath(σ≤τ ) with prefix σ≤τ that is described, as follows: σ n is the nth path P ath(σ≤τ ), where 0 < n ≤ |P ath(σ≤τ )|, and σ n,l is the lth piecewise of path n, where 0 < l ≤ |σ n |, where symbols (’|’) denotes the size of a variable that is between these symbols. We explain in the following how function scheduler estimator estimates original sample clock values. First, the algorithm begins by traversing each path of sample executions set in a bottom-up order to know if the current event can be triggered by a clock with a label ”new clock” or an ”old clock”. In this step, we know that an old clock is valid when the successor nodes have this event activated, otherwise it is as ”inactive clock”. The algorithm goes to the predecessor node of the current node recursively, always in one sample execution, until we have found a possible inactive clock. When an inactive clock is found for the current event, in state s. , this implies that this event e cannot be in τ (s. ), which is an active event set for a state s. . Therefore, even in the worst case, the first state (s0 ) of the sample execution can always be found. Given this element we can reconstruct the original clock value by the sum of the values between the found state (s. or s0 ) and the current state. Lastly, we replace the old clock value by the estimated original clock value. Establish the similarity test of states. The similarity between two active event sets Γ1 and Γ2 within the type I error α is solved by the function similar. Thus, the Kolmogorov-Smirnov test (K-S test) [8, p. 552] is applied to test if two distributions are or are not the same (i.e., compare two empirical cumulative distribution functions). Let {Xn }n≥1 and {Yn }n≥1 be two independent successions of independent real random variables with common distribution functions, respectively F1 and F2 . The K-S test allows testing two hypothesis, H0 : F1 (x) = F2 (x), for all x ∈ R against (4) H1 : F1 (x) 6= F2 (x), for some x ∈ R using the statistical test, r Tn1 ,n2 = n1 n2 sup |Fn1 (x) − Fn2 (x)| n1 + n2 x∈R (5) where Fn1 and Fn2 denotes respectively the empirical distribution functions associated to the samples (X1 , ..., Xn1 ) and (Y1 , ..., Yn2 ). The random variable Tn1 ,n2 converges to the Kolmogorov distribution whose values are tabled in [8, p. 555]. For a significance level α we reject H0 when the observed value Tbn1 ,n2 of the test statistic for the particular samples (x1 , ..., xn1 ) and (y1 , ..., yn2 ) exceeds the value Kα , with G(kα ) = 1 − α. The two empirical cumulative distributions Fn1 and Fn2 are estimated using the function T . This function estimates the distribution from a set of sample clocks and is defined, as follows: clock value of z1 , z2 , ..., zn that are ≤ x (6) Tn (x) = N where x is the threshold of the cumulative function, and zi for all events i ∈ D and D ⊆ E are the sample clock values. Function similar(s0 ,s00 ,α) input : Two states s1 and s2 , and a type I error α. output: Boolean, true if it is similar, or otherwise false. Γ1 ← τ (s1 ); Γ2 ← τ (s2 ); if |Γ1 | 6= |Γ2 | then return false; for each e1 in Γ1 do while |Γ2 | > 0 do e2 ← get(Γ2 ); Fn1 = T (%(s1 e1 )); Fn2 = T (%(s2 e2 )); if q n1 n2 n1 +n2 sup |Fn1 (x) − Fn2 (x)| > Kα then x if similar(δ(s1 e1 ), δ(s2 e2 ), α) 6= true then return false; continue; put(Γ2 , e2 ); for each e1 , e2 in Γ1 such that q s1 e1 ∼ s1 e2 do 1 2 √1 √1 if |%(s1 e1 ) − %(s1 e2 )| > then 2 log α n1 + n2 return false; if |Γ2 | < 1 then return true; else return false; The function similar begins by comparing two feasible event sets Γ1 and Γ2 . The comparison is made by establishing a one to one relation between events in feasible sets. If the relationship between events is complete then the states are similar and so it allows equation 2 to be checked. Another particularity in this algorithm is when two events have the same ’id’ in the feasible event set, for two states respectively. This indicates that the event is triggered as e but there are different probabilities in the transition probability matrix. To solve this, we construct a hypothesis test for two Bernoulli distributions using Hoeffding bounds [3] in order to know if the occurrence probabilities are the same (i.e., satisfies equation 3). This method is similar to the one described in [13]. The method checks if the means %(s1 e1 ) and %(s1 e2 ) of two Bernoulli distributions are statistically different or not. The deterministic merge function. The existence of equal feasible event sets (Γ (s) = Γ (s 0 )) creates a non deterministic choice when merged. This problem can be solved applying a deterministic merge function, as follows: While ∃s, x ∈ Q and ∃e ∈ E such as s0 , s00 ∈ σ(s, x e), merge(s0 , s00 ) (7) The merge shall be made recursively until no more non-deterministic event transitions occur. In the T3S algorithm this is named as dmerge function. We describe with a brief example the application of the equation 7. Let two non-deterministic transitions from s1 and s2 labeled with same event e, τ (s, x ν(s0 )) = {e} and τ (s, x ν(s00 )) = {e} respectively. Supposing that we merge s0 in s00 we get a new non-deterministic choice between s1 and s01 until to the end of the paths. Therefore, we need to apply the merge recursively until there are only deterministic choices. Inferring event distributions using maximum likelihood. And now, to conclude the learning method, we need to introduce the concept of distribution discriminant and its selection criteria. Given a prefix tree with all the similar states merged, we need to estimate the parameters of each empirical distribution Function infer distributions(M) input : A deterministic sta M. output: A deterministic sta M with associated random variables and those distributions. for each n in Q such that removed[n] = 0 do for each eRin τ (s, n) do Ge ← 0∞ arg max {ln [Ld (%[n e])]}; fd ∈D of each event that best fits the sample data. For this, the maximum likelihood estimator (MLE) and selection criteria, such as maximum log likelihood, are needed [9]. In order to test the validity of the selection model, a goodness of fit test could be applied (e.g., X 2 ). We present the function infer distributions that estimates the distribution parameters using the maximum likelihood estimator (MLE) for continuous distributions such as: Exponential, Weibull and Log-Normal. However, there are other continuous distributions, such as: Rayleigh, Normal (with non negative part), that we have not described in detail in this paper, but that can be applied in this estimator. The log likelihood Ld of a distribution fd is defined by n X ln [fd (xi | θ)] (8) ln [Ld (θ | x1 , ..., xn )] = i=0 where θ is the set of parameters for a distribution fd , and x1 , ..., xn are samples to be measured. MLE of fd is composed by the maximization of likelihood function Ld with respect to the set of parameters θ which are parameters used in the following criterion. The maximum log likelihood criterion selects the model that best fits the data from the different estimations of distributions with maximum likelihood [9]. This selection criteria is defined by the maximum value of the calculated log likelihood, i.e., ln [Ldm ] > max {∀d ∈ D s.t. d 6= dm then ln [Ld ]} (9) where D is a set of distributions in analysis, and ln [Ld ] the log likelihood of distribution d. The distribution with maximum likelihood is denoted by dm ∈ D. So, we need two or more distributions to make a decision. Note that distributions of set D are distributions with a parameter or a set of parameters estimated by using the MLE method. By this means we estimate the distribution that, in the limit, is more similar to the distribution that produce these samples to learn. 4 Model identification in the limit The correctness argument for the proposed learning algorithm can be defined in terms of correct model identification. For such, we need to show that the produced GSMP is similar to the model that was used to generate the samples. There are therefore three conditions or clauses for correct model identification: 1. the prefix tree constructed by sample executions provided by a GSMP, P t(P ath(σ≤τ )), is also a GSMP. 2. the sample executions to learn have the minimal information necessary to form the model. 3. the P t(P ath(σ≤τ )) with state merge, in the limit, converges to one similar model that identifies P ath(σ≤τ ). Since the definition 1 is correct by construction and assuming a structurally complete sample, the correctness of the learning algorithm depends essentially on the correctness of the state merge procedure. From definition 1 the first clause is ensured and therefore only the other two clauses need to be guaranteed. For the second clause, we need to ensure that the sample executions to learn form a structurally complete sample (SCS). This is known as the problem of insufficient data training and when this occurs it is obviously impossible to learn the model that produces an incomplete set of sample executions. For the third clause, we need to ensure that, in the limit, the error of merging two non equivalent states tends to zero. Note that the error of merging two non equivalent states is guaranteed by the K-S test. With these three clauses satisfied, we can prove that the model that is learned by the algorithm, in the limit, and behaves as the original. Ensuring a structurally complete sample. Commonly used methods to achieve a structurally complete sample, like reachability analysis, are not enough when the model is not known. In this case acquiring a SCS is a big challenge. The selection of termination probability for a sample execution can be used as a method to achieve a SCS in known and unknown models. However, the probability measure of a path from an unknown model is not trivially assured. A SCS is a sample composed by a set of paths that explores every possible transition and every reachable state. This structure solves a common problem known as insufficient data training to learn a model, i.e., only with paths of infinite size can one guarantee that for any model, the learned model eventually converges to an equivalent. With a SCS, we ensure that the minimum information needed to learn a model from sample executions is achieved. In order to ensure that a set of paths relying on SCS, we introduce a termination probability pt as a solution. The simulation technique is described, as follows: 1) simulate the SDES M , 2) terminate when probability measure of a path σ≤τ of execution is less than pt , i.e., µ(C(σ≤τ , hEk , Yk∗ i , Xk , ..., hEn , Yn∗ i , Xn )) < pt , and 3) apply recursively the steps 1 and 2 to generate more sample executions. We simply note that the solution method based on termination probability has weaker correctness guarantees than reachability analysis. It also places a greater responsibility on the user, who has to choose a good value for pt . The automatic achievement of pt is not trivial. The state merge error, in the limit, converges to zero. Assuming that the first two correctness clauses are satisfied then the learning algorithm can only make errors when testing the similarity between two states. In addition, the errors α and β between two event distributions of the K-S test are defined, as follows: . α is the type I error of H0 be rejected, where in fact H0 should not be rejected, and . β is the type II error of H1 be accepted, where in fact H1 should be rejected. Hence this means that the state merge errors αs and βs are defined by the multiplication of the errorsQmade in the comparison of each event distribution Qk k αs = i=1 αi and βs = i=1 βi , where k is the number of similar events. ∗ Moreover, the model errors α and β ∗ are equal Qn to the multiplication Qn of the error αs and βs used for each state merged α∗ = i=1 αs [i] and β ∗ = i=1 βs [i], where n is the number of merged states. We present, in the following, two propositions about the bounds of type II error. Proposition 1. Suppose the Kolmogorov-Smirnov test for two samples with size n1 e n2 respectively, and a significance level α. For sufficiently large samples, i.e., when n1 → ∞ and n2 → ∞, β tends to zero. In the following we present a sketch of the proof. The proof of this proposition is based on the following facts: by the theorem of Glivenko-Cantelli when H0 is true and n1 and n2 tend to infinity, sup |Fn1 (x) − Fn2 (x)| converges certainly x∈R to zero. So, from the uniqueness of the limit, when H0 is true and n1 → ∞, q n2 sup |Fn1 (x) − Fn2 (x)| tends certainly to +∞. n2 → ∞, we have that nn11+n 2 x∈R Therefore, in the validity of H1 , the probability of rejecting H0 tends to 1, which was to be demonstrated. It is known that the convergence of k-S test is exponential [24]. Moreover, the reader can find a detailed account to β error boundaries and correctness arguments as presented here in [14]. Proposition 2. If the type II error β, in the Qklimit, for the K-S test converges to zero, a multiplication of the type II error i=1 βi , in the limit, also tends to zero. This proposition is trivially satisfied. Given the limit law of multiplication, we know that the limx→a f (x) · g(x) = limx→a f (x) · limx→a g(x). Then, because f (x) = g(x), the limit is maintained. 5 Tool and proof of concept The implementation of the learning algorithm is the basis of the SDES toolbox, that allows the learning and analysis of a set of case studies, such as: task schedulers, land mobile satellite communication systems, and network traffic model estimation. In order to illustrate the learning process, we use as an example a scheduler for a multi-processor system and show how the proposed method can learn a model that can be used for further analysis. SDES Toolbox. We have developed a SDES toolbox5 in C and C++ language that implements the presented learning approach. The toolbox was developed to analyze and learn generalized semi-Markov processes. It also supports the model description by an event-driven language that can be directly used as the input model language to a GSMP model checker [21]. 5 Available from http://desframework.sourceforge.net/ a , ab, c b A, bc, a AB, c, b c c init; 1/3 start , , abc , ac, b , bc, a b AC, b, init; 1/3 init; 1/3 c c ABC, , b a a b C, ab, a B, ac, c BC, a, Convergence analysis Performance analysis Number of states Time (s) 6 4 2 0 102 103 Number of samples 104 10 5 0 0 200 400 600 800 Number of samples 1,000 Fig. 1. Learning GSMP of a multi-processor system scheduler with uncertainty Stochastic analysis of a scheduler for a multi-processor system. An optimal scheduler design for a multi-processor system with uncertainty in task duration is difficult to achieve and a significant challenge [18]. In figure 1, we present the model from which it is possible to derive, statistically, answers about the worst case sequence and the optimal case sequence of a two-processor scheduler system. In this system there are two processors that can run two tasks at the same time. Supposing that there are three tasks {a, b, c}, only two tasks can be run at the same time and the other one only when one of the tasks is finished. The model of this system has eleven states which describe the state of the two processors and tasks at any given time. The scheduler can initially make three choices, (a, b), (a, c), or (b, c). The event init of the model, representing these choices is: p([, ab, c]; [, , abc], init) = 13 , p([, ac, b]; [, , abc], init) = 13 , and p([, bc, a]; [, , abc], init) = 13 respectively. These choices bind the time (i.e., worst and optimal) of the execution for these three tasks. If we have a scheduler that is completely random (i.e., the probability of events {ab, ac, bc} are equiprobable) then we select the path with maximum probability which means that it is the better sequence. Thus, if we have a scheduler that begins with the optimal tasks then we will have an optimal scheduler for these tasks. However, we need to distinguish two situations, as follows: if only exponential distributions are used then the choice is easy, the rate of distribution identifies the order (the lower expected value is the more probable), but if on the other hand we have different continuous distributions then the ordering selection is not so trivial. This will be the case for this example that our method will solve. Namely using the distributions init : Tinit ∼ Exponential(1), a : Ta ∼ W eibull(0.1, 1), b : Tb ∼ Exponential(0.4), and c : Tc ∼ Log-N ormal(0, 0.25), respectively. Given the sample executions that form a SCS, we have compared the performance and convergence of our algorithm given an increasing number of sample executions, see figure 1. We can see in the convergence graph that for one thousand sample executions, the model converges into a model with same number of states. According to the correctness of our learning algorithm, we have guaranteed that if the umber of samples grows infinitely then the model converges to the original model. Notice that in fact in this example we verify that the model learnt by our algorithm with approximately nine hundred sample executions has the same event language of the original model. This experiment was made on a machine with an Intel Core 2 Duo CPU T7500 @ 2.2Ghz processor with 4Gb of memory. An interesting point in this model is that the path with the greatest probability to occur is the optimal case execution and the path with the lowest probability is the worst case execution, when we have a random scheduler. 6 Conclusion and Future Work To the best of our knowledge, this is the first learning algorithm that is able to cope with GSMP learning of deployed stochastic discrete event systems for which we do not know the model before-hand. The learning algorithm can be used to verify the deployed systems using existing probabilistic model-checking tools. We also have developed a toolbox for Matlab that applies the techniques described in this paper. We have shown with our experiment that this type of model is really capable and scalable. We can use it not only for the analysis of a computer system but also to verify or test it. However, one of the limitations of our work is that it may not scale up for systems having large stochastic timed automata. Development of techniques that allow the approximate verification while the model is learned may be the solution. Acknowledgments We would like to thank to Ana Paula Martins for the very constructive discussions about the statistical properties of the proposed T3S algorithm. References 1. Benedikt Bollig, Peter Habermehl, Carsten Kern, and Martin Leucker. Angluinstyle learning of nfa. In Proceedings of the 21st international jont conference on Artifical intelligence, IJCAI’09, pages 1004–1009, San Francisco, CA, USA, 2009. Morgan Kaufmann Publishers Inc. 2. Benedikt Bollig, Joost-Pieter Katoen, Carsten Kern, Martin Leucker, Daniel Neider, and David R. Piegdon. libalf: The automata learning framework. In CAV, pages 360–364, 2010. 3. Rafael C. Carrasco and Jose Oncina. Learning deterministic regular grammars from stochastic samples in polynomial time. RAIRO (Theoretical Informatics and Applications, 33:1–20, 1999. 4. Christos G. Cassandras and Stephane Lafortune. Introduction to Discrete Event Systems. Springer-Verlag New York, Inc., Secaucus, NJ, USA, 2006. 5. Alexandre David, Kim G. Larsen, Axel Legay, Marius Mikucionis, and Zheng Wang. Time for statistical model checking of real-time systems. In CAV, pages 349–355, 2011. 6. Andr´e de Matos Pedro. Learning and testing stochastic discrete event systems. Master’s thesis, Universidade do Minho, Portugal, December 2011. 7. Andr´e de Matos Pedro and Sim˜ ao Melo de Sousa. Learning generalized semimarkov processes: From stochastic discrete event systems to testing and verification. Technical Report DCC-2012-01, Department of Computer Science, University of Porto. 8. Morris H. DeGroot. Probability and Statistics. Addison Wesley, 2nd edition, 1989. 9. Arabin Kumar Dey and Debasis Kundu. Discriminating among the log-normal, weibull, and generalized exponential distributions. IEEE Transactions on Reliability, 58(3):416–424, 2009. 10. P. W. Glynn. A gsmp formalism for discrete event systems. Proceedings of The IEEE, 77:14–23, 1989. 11. E. Mark Gold. Language identification in the limit. Information and Control, 10(5):447–474, 1967. 12. Mor Harchol-Balter and Allen B. Downey. Exploiting process lifetime distributions for dynamic load balancing. ACM Trans. Comput. Syst., 15:253–285, August 1997. 13. Christopher Kermorvant and Pierre Dupont. Stochastic grammatical inference with multinomial tests. In Proceedings of the 6th International Colloquium on Grammatical Inference: Algorithms and Applications, ICGI ’02, pages 149–160, London, UK, UK, 2002. Springer-Verlag. 14. Jerome Klotz. Asymptotic efficiency of the two sample Kolmogorov-Smirnov test. Journal of the American Statistical Association, 62(319):932–938, 1967. 15. Axel Legay, Benoˆıt Delahaye, and Saddek Bensalem. Statistical model checking: An overview. In RV, pages 122–135, 2010. 16. Ming-Wei Lu and Cheng Julius Wang. Weibull data analysis with few or no failures. In Hoang Pham, editor, Recent Advances in Reliability and Quality in Design, pages 201–210. Springer London, 2008. 17. Rajesh Parekh and Vasant Honavar. Learning dfa from simple examples. Machine Learning, 44(1/2):9–35, 2001. 18. Michael L. Pinedo. Scheduling: Theory, Algorithms, and Systems. Springer Publishing Company, Incorporated, 3rd edition, 2008. 19. Koushik Sen, Mahesh Viswanathan, and Gul Agha. Learning continuous time markov chains from sample executions. In Proceedings of the The Quantitative Evaluation of Systems, First International Conference, pages 146–155, Washington, DC, USA, 2004. IEEE Computer Society. 20. Wei Wei, Bing Wang, and Don Towsley. Continuous-time hidden Markov models for network performance evaluation. Perform. Eval., 49:129–146, September 2002. 21. H˚ akan L. S. Younes. Ymer: A statistical model checker. In CAV, pages 429–433, 2005. 22. H˚ akan L. S. Younes, Edmund M. Clarke, and Paolo Zuliani. Statistical verification of probabilistic properties with unbounded until. In SBMF, pages 144–160, 2010. 23. Hakan Lorens Samir Younes. Verification and planning for stochastic processes with asynchronous events. PhD thesis, Pittsburgh, PA, USA, 2004. 24. C. S. Yu. Pitman efficiencies of Kolmogorov-Smirnov test. The Annals of Mathematical Statistics, 42(5):1595–1605, 1971.

© Copyright 2026